Matthias Endler

mre@mastodon.social

New live episode of Rust in Production and it's a special one! 🥁

Recorded on stage at Rust Week in Utrecht with two people shaping the future of the Linux kernel:

🐧 Greg Kroah-Hartman (@gregkh ), Linux Foundation Fellow

⚙️ Alice Ryhl, core maintainer of Tokio, working on Rust for Linux at Google

▶️ https://corrode.dev/podcast/s06e04-rust4linux/

Huge thanks to the Rust Week crew for hosting this one. You're awesome! 🦀

Anisse

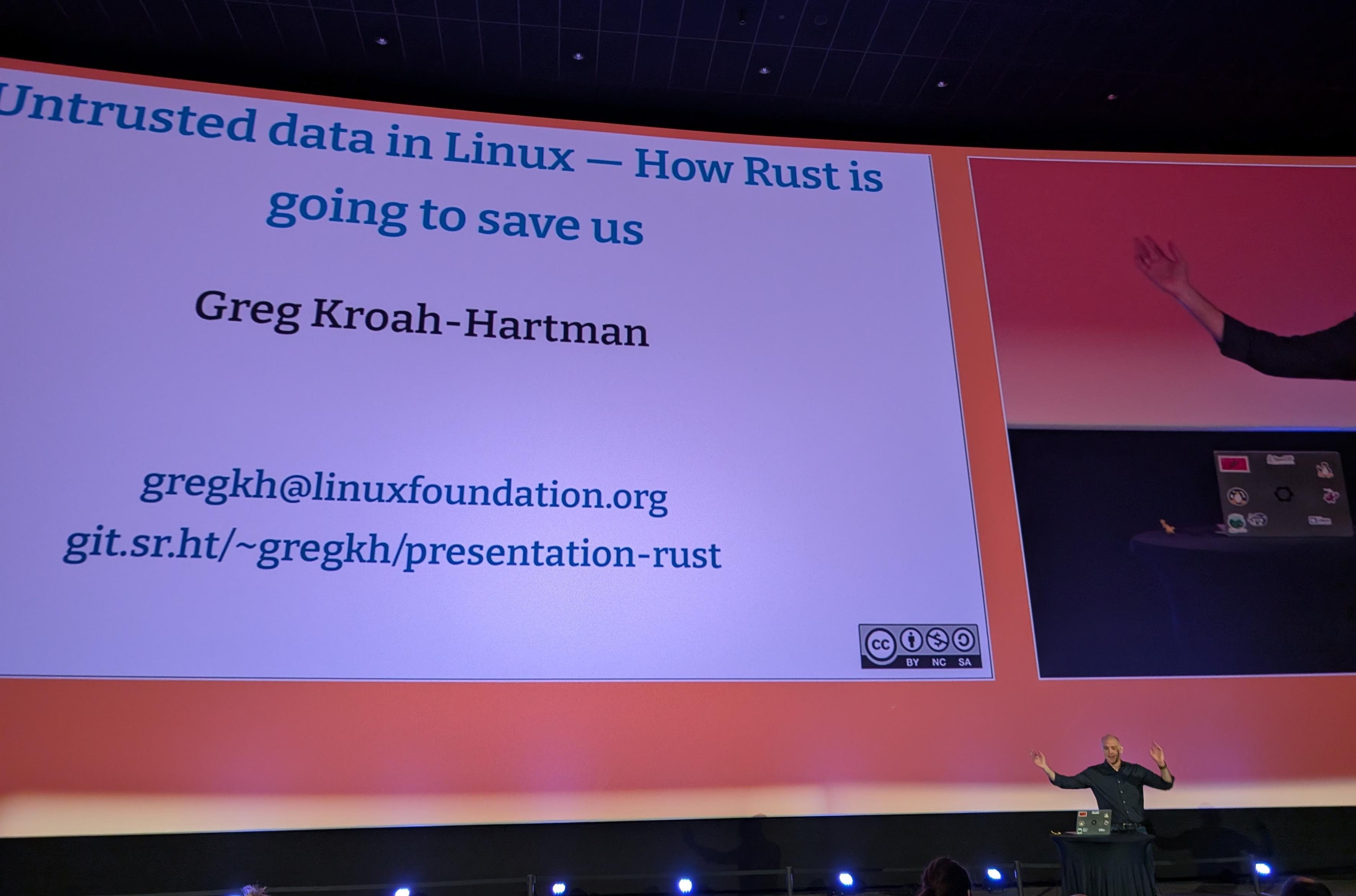

Aissen@treehouse.systems

First talk of the day: Greg @gregkh KH on "Untrusted data in Linux: How Rust is going to save us."

Anisse

Aissen@treehouse.systems

I'm at the recording of the Rust in Production podcast on "Oxidizing the Linux Kernel" with Greg @gregkh KH, Alice Ryhl and Matthias @mre Endler.

#RustWeek #RustWeek2026 #LinuxKernel #RustLang #RustForLinux

Greg K-H

gregkh

https://2026.rustweek.org/schedule/tuesday/

Live streams of the conference:

https://www.youtube.com/@rustnederlandrustnl/streams

I think the podcast might be streamed here as well:

https://www.youtube.com/playlist?list=PLbWDhxwM_45lkJfL95zELDgO01mnrRQ6t

but don't really know...

Greg K-H

gregkh

"If Linux can be maintained by sending patches to an email mailing list, 'doesn’t work at scale' arguments are skill issues."

https://dbushell.com/2026/04/29/github-is-sinking/

Hoshino Lina (星乃リナ) 🩵 3D Yuri Wedding 2026!!!

lina@vt.social

Typical ML argument: "If I can read something legally, why can't I train an LLM on it?"

Humans are capable of reading things and later writing a similar thing that is still a copyright violation. If I go and write a book that follows the plot line of Star Wars, that's still a copyright violation, even if no text is literally the same. If I play the melody to a song on my piano and release it without the appropriate mechanical cover license, that's also a copyright violation.

The reason this does not happen often is that, as humans, we are aware that that's plagiarism and there are rules. Sometimes it happens by accident, and people still get sued and lose.

LLMs have no such awareness and routinely output things which are blatant copyright violations when appropriately prompted. That means the model weights encode that work, and therefore, are themselves a derivative work.

Your brain encodes a massive amount of copyrighted information. You are not a walking copyright violation because humans aren't data, can't be copied and distributed en masse, have human rights, etc. This is why "mind reading machines" are a classic dystopian plot point (monetizing your thoughts etc).

An LLM is not a human, does not have human rights, nor human privileges. It is data, and if it encodes copyrighted information, that's a derivative work. If you aren't following the license of the training data, that's a copyright violation.

Greg K-H

gregkh

"The Kernel assigns lots of CVEs. They say it’s because they don’t really know how the Kernel is being used, so they err on the side of caution. Companies hate this because they have to deal with a lot of CVEs. Does the Kernel do this because it’s easier or do they have some sort of secret nefarious reason? Probably because it’s just easier and they have zero downside to disclosing and moving on. "

RE: https://infosec.exchange/@joshbressers/116507930206819253

Michał "rysiek" Woźniak · 🇺🇦

rysiek@mstdn.social

A lot of people are apparently happily running a script clearly marked as a root exploit from some random website using curl | bash

Some do inspect the script, but then still run it using curl | bash anyway.

Incidentally, this very relevant blogpost about detecting curl | bash and serving different scripts based on that is almost exactly a decade old:

https://web.archive.org/web/20230318063325/https://www.idontplaydarts.com/2016/04/detecting-curl-pipe-bash-server-side/

Mike [SEC=OFFICIAL]

mike@chinwag.org

Once again, my professional recommendation in response to the latest Linux kernel vulnerability in the news is that you should gather up all your electronic devices, cast them into the sea, and retreat to the woods.

Each night, gather your children and tell them tales of the Before Times when the hubris of humanity grew so large that we made idols of sand and spoke to them as equals. Remind them that the sand, of course, did not speak or think, but we imagined it could, and let it guide us to folly.

Should a stranger ever come to your village with a glowing rectangle, encourage the youth to beat them with sticks.

Jorge Castro

jorge@hachyderm.io

I was explaining how we built #bluefin with buildstream and bootc to a coworker and he goes:

"So you made Gentoo but cloud native."

And now I am never going to shut up about it lol.

Greg K-H

gregkh

Here it is after I cleaned up some of the horrid cable mess that had grown up around it.

Stefan Eissing

icing@chaos.social

We now require proof of work before you can submit a #curl security report.

Like mowing @bagder 's lawn or washing his car.😌

Greg K-H

gregkh

https://lore.kernel.org/lkml/2026042334-acutely-unadorned-e05c@gregkh/

As @axboe said the other day, we aren't expecting a box of chocolates:

https://lore.kernel.org/r/2f2c91cb-f20e-44eb-8ba3-2d5b3d649642@kernel.dk

but these past weeks have made me feel like someone owes a few of us kernel developers a bunch of whisky at the very least...

Greg K-H

gregkh

So, until I figure out how to wipe all gdm settings from the system (hint: I tried the "reset" option in gdm-settings and blowing away all the dconf files I could find on the disk, but odds are I missed something), I guess I'm now a KDE user until I move to a new system...

Thorsten Leemhuis (acct. 1/4)

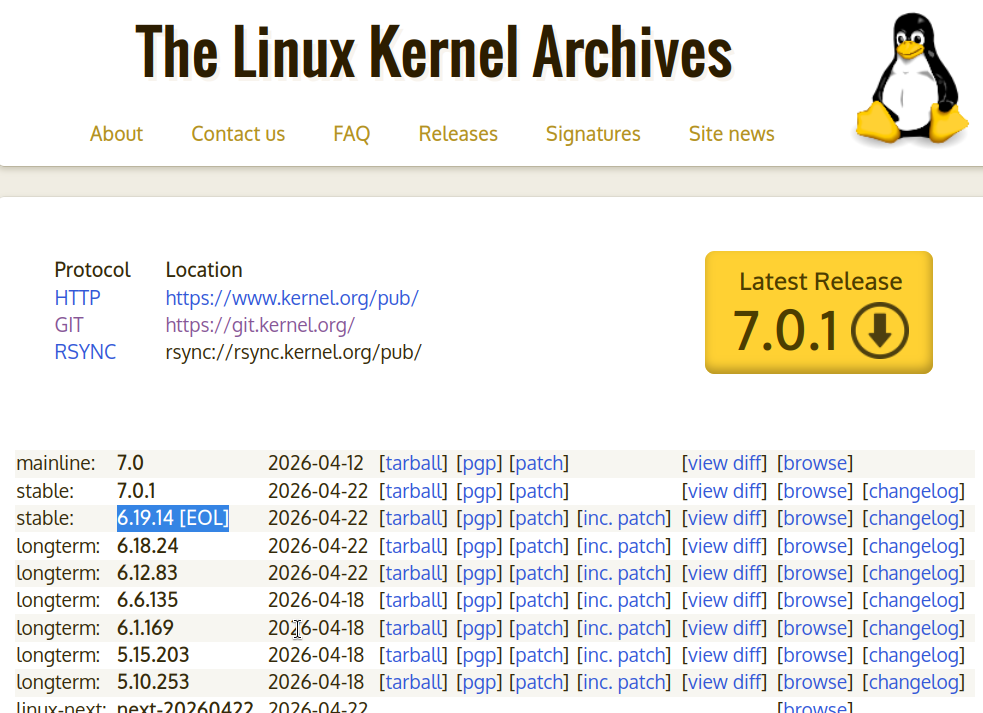

kernellogger@hachyderm.io

The #Linux 6.19.y series is now end of life:

"This is the LAST 6.19.y kernel to be released, this branch is now end-of-life. Please move to the 7.0.y kernel branch at this point in time."

https://lore.kernel.org/all/2026042220-coastline-flirt-ad3c@gregkh/

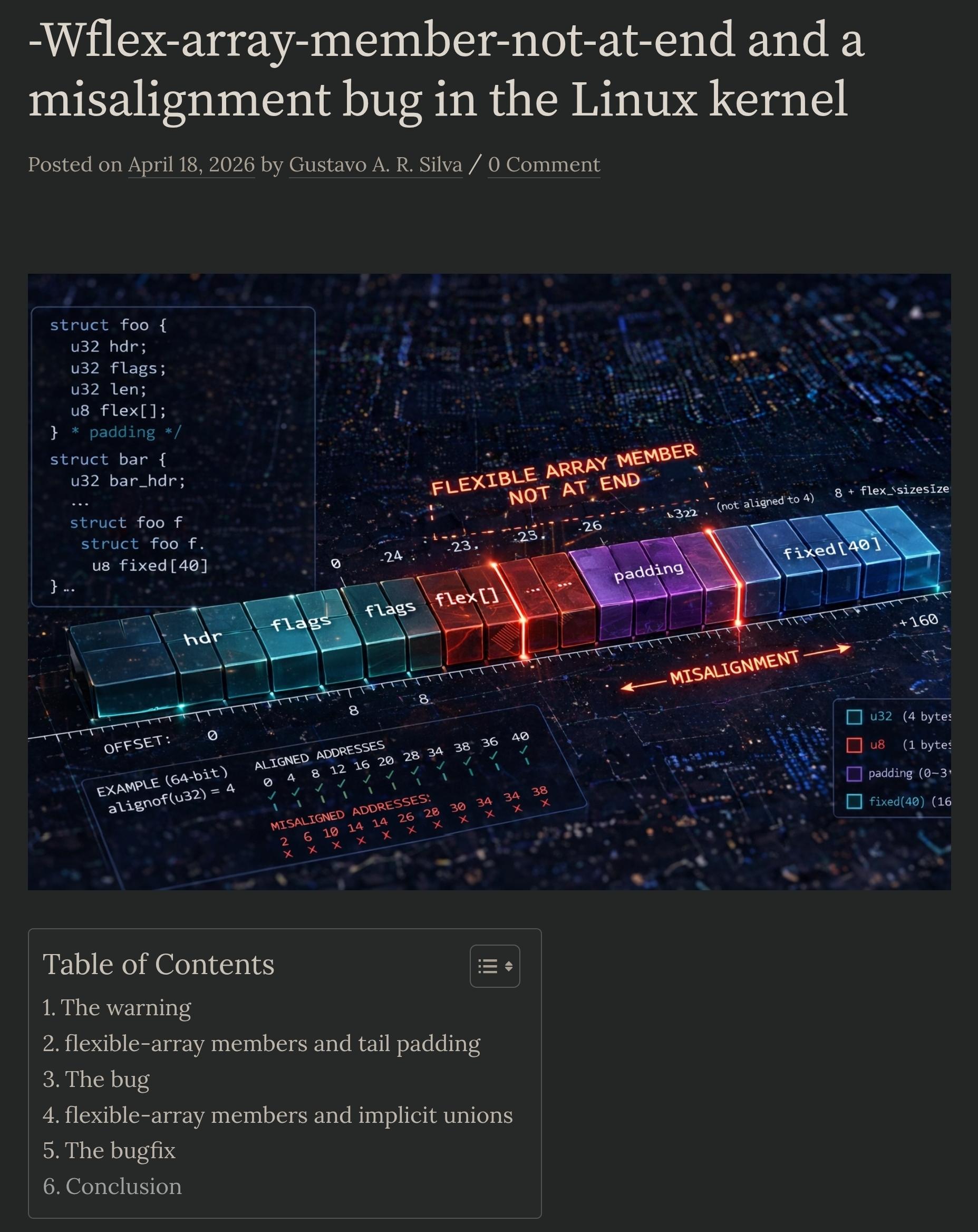

Gustavo A. R. Silva

gustavoars@fosstodon.org

"During one of my presentations at Open Source Summit Japan 🇯🇵 last year, I talked about a bug I found while addressing -Wflex-array-member-not-at-end issues in the Linux kernel. [...]

[...] not-at-end FAMs are a compiler extension that may cause undefined behavior, and compilers don't handle the sizes of objects containing them consistently. For this reason, they are now deprecated. [...]"🐧
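To make the quoted point concrete, here is a minimal C sketch of a flexible array member (FAM) that ends up "not at end". The struct and function names are hypothetical and do not come from the kernel or the talk. ISO C forbids embedding a FAM-carrying struct inside another struct at all; GCC and Clang accept it as an extension, and GCC's -Wflex-array-member-not-at-end flags the case where that member is not the last one, because the array has no storage of its own and overlaps whatever follows it.

```c
#include <assert.h>
#include <stddef.h>
#include <stdio.h>

/* Hypothetical header type ending in a flexible array member.
 * On its own this is standard C99. */
struct header {
    size_t len;
    unsigned char data[]; /* FAM: contributes no storage to sizeof */
};

/* Hypothetical container embedding the FAM-carrying struct NOT at the end.
 * This is a compiler extension (ISO C forbids it), and it is the layout
 * -Wflex-array-member-not-at-end warns about: hdr.data[] has no room here,
 * so its elements alias the memory occupied by `trailing`. */
struct wrapper {
    struct header hdr;
    int trailing;
};

/* Holds for any struct: a member declared after hdr starts at or after
 * the end of hdr. */
static_assert(offsetof(struct wrapper, trailing) >= sizeof(struct header),
              "trailing starts at or after the end of hdr");

/* One common fix: make the FAM-carrying member the last one, so the array
 * can extend into memory allocated past the struct. Embedding it is still
 * an extension, but not the not-at-end case the warning targets. */
struct wrapper_fixed {
    int trailing;
    struct header hdr;
};

/* Print where hdr.data[] begins relative to the neighbouring member. */
void print_layout(void) {
    printf("wrapper:       hdr.data at offset %zu, trailing at offset %zu\n",
           offsetof(struct wrapper, hdr) + offsetof(struct header, data),
           offsetof(struct wrapper, trailing));
    printf("wrapper_fixed: trailing at offset %zu, hdr.data at offset %zu\n",
           offsetof(struct wrapper_fixed, trailing),
           offsetof(struct wrapper_fixed, hdr) + offsetof(struct header, data));
}
```

On a typical 64-bit Linux build, calling `print_layout()` should show `hdr.data` and `trailing` in `struct wrapper` starting at the same offset. That overlap is what makes writes through `data[]` dangerous and what cleanups targeting this warning aim to eliminate.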