benjojo

benjojo@benjojo.co.uk

Cor, RIPE just had a Brexit referendum moment

51.12% to a 48.88% vote

On an incredibly contentious topic that has been squabbled over for years

Or 68 votes

This will surely not have any long running consequences to the mailing list arguments...
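The two figures in the post (the percentage split and the 68-vote margin) are enough to estimate the turnout. This is a rough sketch, assuming a strict two-way split and that the percentages are rounded to two decimals. The function name `infer_vote_counts` is made up for illustration, and the resulting totals are an inference, not numbers reported in the post.

```python
def infer_vote_counts(share_for_pct, margin_votes):
    """Estimate total and per-side counts from a two-way percentage split
    and the raw vote margin. Assumes the two shares sum to 100%."""
    share_against_pct = 100.0 - share_for_pct
    # The margin as a fraction of all votes cast.
    margin_fraction = (share_for_pct - share_against_pct) / 100.0
    # Rounded percentages make this an estimate, not an exact count.
    total = round(margin_votes / margin_fraction)
    votes_for = (total + margin_votes) // 2
    votes_against = total - votes_for
    return total, votes_for, votes_against


total, votes_for, votes_against = infer_vote_counts(51.12, 68)
print(total, votes_for, votes_against)  # prints: 3036 1552 1484
```

Because the published percentages are rounded, the true total could sit a dozen or so votes either side of this estimate.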

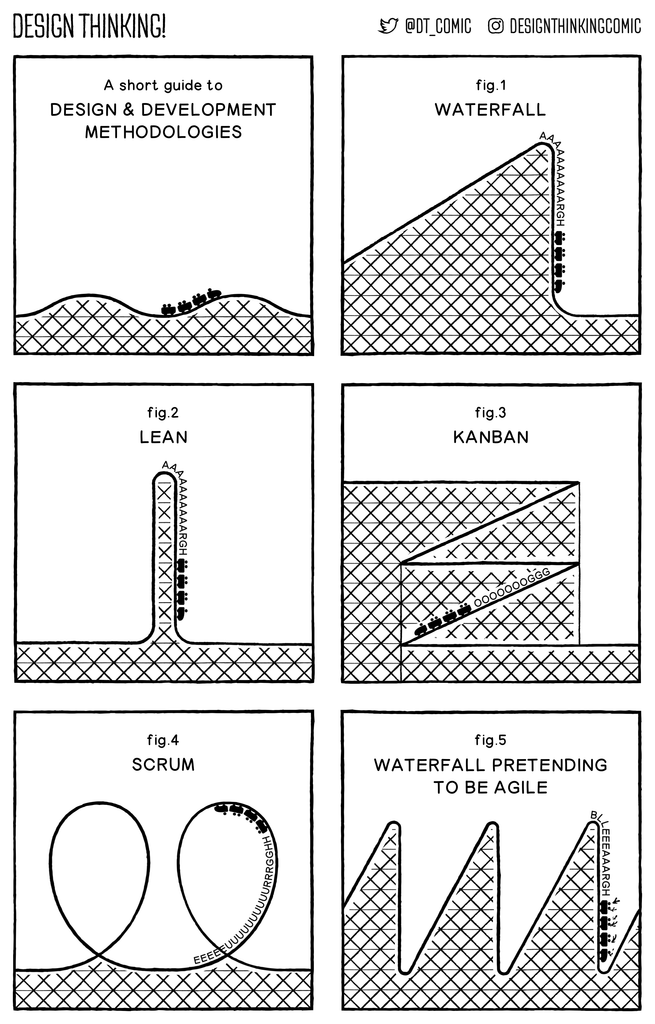

DESIGN THINKING! Comic

designthinkingcomic@mastodon.social

This was always my most popular post on Twitter back in the day, so I thought I'd repeat it here once again...

#webcomics #comics

Preston von Gabbleduck

backupbear@aus.social

For they were, all of them, deceived.

For the Dark Lord Sauron had embedded deep within his EULA the right to change the terms and conditions without notice

And once the users had become dependent on the service

He started increasing the cost of his tokens

Stephen Rosen

sirosen@mastodon.social

We're hiring at my workplace.

If you are interested in working

- in a science-adjacent nonprofit

- in #python

- doing web backend and data engineering stuff

- *not using generative AI*

- remote work friendly

Please drop me a line! Your application won't skip the queue but I can give you a boost.

I rarely get a chance, since we're so small, but would love to help someone on here #GetFediHired !

Please feel free to boost for reach, or forward to your friends!

Jules she/her

afewbugs@social.coop

I'm not entirely sure where I'm going with this, just thinking out loud, but I think it generally makes life more pleasant if you start out assuming everyone has good intentions but their brain may work in a completely different way to yours.

AnarchoNinaWrites

AnarchoNinaWrites@jorts.horse

Fwiw, computer people are starting to sound like climate scientists started to sound 5-6 years ago. You know, that whole "yeah whatever I fucking told you and you fucked around so you're all gonna find out - I'm going to get ice cream because NOTHING fucking matters anymore and we're all doomed."

That.

I think I understand why AI is SOCIALLY and ECONOMICALLY a fucking time bomb - but they understand why it's TECHNICALLY a time bomb too. And I don't like how dead man walking they all sound...

Aral Balkan

aral@mastodon.ar.al

Talked to a software engineer at Microsoft working on Copilot Studio today at a social event and he said he was ashamed that he hadn’t written a single line of code in over three months. “I used to take pride in my work.” (They simply create plans in natural language and feed it to the LLM which generates the code. They can’t even do human code reviews anymore as there’s too much code being generated.)

He said a lot of them were waiting for a catastrophic event (something that would take down critical infrastructure) to get top management to reverse course. He seemed to think such a failure was very likely.

Given what we’ve been seeing recently, I tend to agree with him. Although I feel they will just double down. There’s too much money in the pot for them to fold.

I'll be teaching a course in the fall on data communication.

One of the assignments I hope to put together is a lesson on how data is manipulated. I want to show how easy it is for climate change deniers, anti-vaxxers, etc. to crop data, stretch or flip an axis, and suggest the opposite of what the data is actually showing. I'm still working through the assignment, and I'm thinking of having them make one honest representation and one less so.

I think there's value to such a lesson given how much downright lying we have from not just randos but even political circles these days.

Was just going to use publicly available data sources but then I am thinking that there must be researchers here who have awesome data they wouldn't mind seeing put into visual form. If you do have data you'd be willing to let me use, please drop me a comment or PM and let me know how to access it. Thanks!

(P.S. would appreciate a share for wider reach)

Tinker ☀️

tinker@infosec.exchange

I'm going to whisper this. So I'll choose my words carefully. I'll use general terms but am referring to specific multiple things. Please read between the lines.

Those of you that are doing things are having a good effect.

- The media is not covering you (this is a good sign. it means they don't want to bring attention to your actions).

- They are discussing you in board rooms and in decision making meetings.

- They are saying things like "we have to pause that project at that location because it's currently drawing too much attention" and "we have to reframe this announcement to downplay that thing" and "we need to try these concessions to lower the heat a bit".

They are hoping you "get tired and bored and move to a new thing" so they can get back to work without interference.

Keep doing what you're doing. Join folks that are doing things if you aren't doing anything yet. Do what you can, as you can, when you can.

It is working.

Sebastian Schinzel

seecurity@infosec.exchange

RE: https://social.v.st/@quixoticgeek/116611731183393595

The background story of this is: academics increasingly use AI to assist in research and paper writing. This leads to flaws in the papers due to hallucinations, which are generally hard to detect, with an important exception: they are easy to detect for citations, because you can simply search for the cited work. If you find it, good. If you do not find it, or you find a paper strikingly similar, but with a slightly different title or different authors, etc: that's clearly a hallucination.

arXiv published a policy banning all authors of a paper for one year if it contains evidence of LLM-generated hallucinated content.

Now as a co-author of a paper, in the past, I generally did not check each and every citation. I contribute my parts of the writing, review stuff others wrote, but generally trust that my co-authors know what they are writing. The reason is that we have spent weeks, months, sometimes years researching stuff together and this is just about writing it down.

The arXiv policy means that a single co-author can now cause substantial trouble for the rest. Being strict here is harsh, but is probably the right thing in the long run.
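The citation check described in this post (search for the cited work, then compare what comes back) can be sketched roughly as follows. This is only an illustration under loud assumptions: `classify_citation`, the record layout, the 0.8 similarity threshold, and the sample titles are all hypothetical, and `search_results` stands in for whatever a real literature search would return. It is not a description of how arXiv or anyone else actually checks papers.

```python
from difflib import SequenceMatcher


def title_similarity(a, b):
    """Return a 0..1 similarity ratio, ignoring case and outer whitespace."""
    return SequenceMatcher(None, a.strip().lower(), b.strip().lower()).ratio()


def normalise_authors(authors):
    """Compare author lists as case-insensitive sets."""
    return {name.strip().lower() for name in authors}


def classify_citation(cited, search_results, near_threshold=0.8):
    """Classify a citation as 'found', 'near miss', or 'not found'.

    cited and each search result are dicts with 'title' and 'authors'.
    The threshold is an arbitrary assumption, not a calibrated value.
    """
    best_record, best_score = None, 0.0
    for record in search_results:
        score = title_similarity(cited["title"], record["title"])
        if score > best_score:
            best_record, best_score = record, score

    if best_record is None or best_score < near_threshold:
        return "not found"
    exact_title = best_score == 1.0
    same_authors = (normalise_authors(cited["authors"])
                    == normalise_authors(best_record["authors"]))
    if exact_title and same_authors:
        return "found"
    # Strikingly similar, but title or authors differ: flag for a human.
    return "near miss"


# Hypothetical search results, invented purely for illustration.
results = [{"title": "An Example Study of Widget Parsing",
            "authors": ["A. Author", "B. Writer"]}]

print(classify_citation({"title": "An Example Study of Widget Parsing",
                         "authors": ["A. Author", "B. Writer"]}, results))
print(classify_citation({"title": "An Example Study of Widget Parsing",
                         "authors": ["C. Someone"]}, results))
print(classify_citation({"title": "Gardening Notes for Beginners",
                         "authors": ["A. Author"]}, results))
```

A "near miss" or "not found" result is only a prompt for a human to look closer; a real check would also have to cope with preprints, retitled versions, and incomplete search indexes.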

John Rogers

johnrogers.bsky.social@bsky.brid.gy

I know you're sick of it, but the key to political messaging is repetition: A billion dollars is the socio-economic equivalent of a loose nuke, and we should work to prevent the acquisition of the former with the same urgency and ruthlessness we use to prevent the acquisition of the latter.

allison

aparrish@friend.camp

i used the same data set but replaced each country with a "gender identity" (man, woman, trans woman, trans man, non-binary) and prompted chatgpt to characterize the differences between the groups. lo and behold, i got some fantastic gender stereotype trash

Zalka Csenge Virág, PhD

TarkabarkaHolgy@ohai.social

Part of why I'm baffled and outraged by #AI is because I'm a traditional storyteller. The stories I tell are fascinating to me because they have been told by countless generations. Shaped by every single person who passed them on. In spoken word, person to person, retelling them in the moment with deep attention to their audience's moods and needs. The stories kept changing but they changed through human connection.

Stories are not "content" or "text". They are connection.

furrfu 🇪🇺

furrfu@mendeddrum.org

I saw someone mention this article titled "The quiet grief of adult friendship", but lost the original toot when the power went out.

It's a short but poignant read.

https://timesofindia.indiatimes.com/blogs/civil-irony/the-quiet-grief-of-adult-friendship/

Miguel de Icaza ᯅ🍉

Migueldeicaza@mastodon.social

This is tragic, the fall of Bitwarden: https://blog.ppb1701.com/the-quiet-renovation-at-bitwarden

Thinking about the remarkable body of work that Sir Ian McKellen has produced - a career spanning 74 years - three quarters of a century! And yet this incredible resumé will forever be reduced to shouting at your family YOU SHALL NOT PASS whenever you find a big stick in the woods

Stefan Eissing

icing@chaos.social

Recommended read:

https://www.baldurbjarnason.com/2026/the-old-world-of-tech-is-dying/

Jonathan Corbet

corbet

LSFMM remains one of the most intense, technically challenging, and interesting events in the kernel space, and this year's gathering didn't disappoint. It was, though, somewhat overshadowed by the rounds of layoffs happening in the industry. There were developers present who had lost their jobs, or feared losing their jobs, or were working for companies that have decreed that they are no longer interested in upstream development. That added to the general sense of darkness that overlays much of life these days.

Things will get better soon, right?

Denys Séguret

dystroy@mastodon.dystroy.org

Here's a strange situation:

thousands of #Rust developers use #bacon, #broot, #dysk, or #lazy-regex every single day — tools I wrote, maintain, and improve for free.

Their companies, though? None of them want to hire their author.

If you use my tools at work and your company does #Rust, I'd really appreciate a hand landing a job or freelance mission. A boost goes a long way. 🙏🦀

David Gerard

davidgerard@circumstances.run

RE: https://infosec.exchange/@lcamtuf/116517194178120536

fucking up a craven license-washing rewrite? well I never