- Linux Kernel Developer @ Oracle (Linux Kernel MM) (2025.02 ~ Present)

- A slab subsystem co-maintainer and a reviewer for the reverse mapping subsystem

- Former Intern @ NVIDIA, SK Hynix, Panmnesia (Security, MM and CXL)

- B.Sc. in Computer Science & Engineering, Chungnam National University (Class of 2025)

Opinions are my own.

My interests are:

Memory Management,

Computer Architecture,

Circuit Design,

Virtualization

Harry (Hyeonggon) Yoo

hyeyoo

it is quite confusing that the floor index starts from zero here in Zagreb

Harry (Hyeonggon) Yoo

hyeyoo

Edited 6 months ago

vibe-coded script for GCOV coverage visualization!

Hope this is helpful when I'm testing something or reviewing complicated code

Harry (Hyeonggon) Yoo

hyeyoo

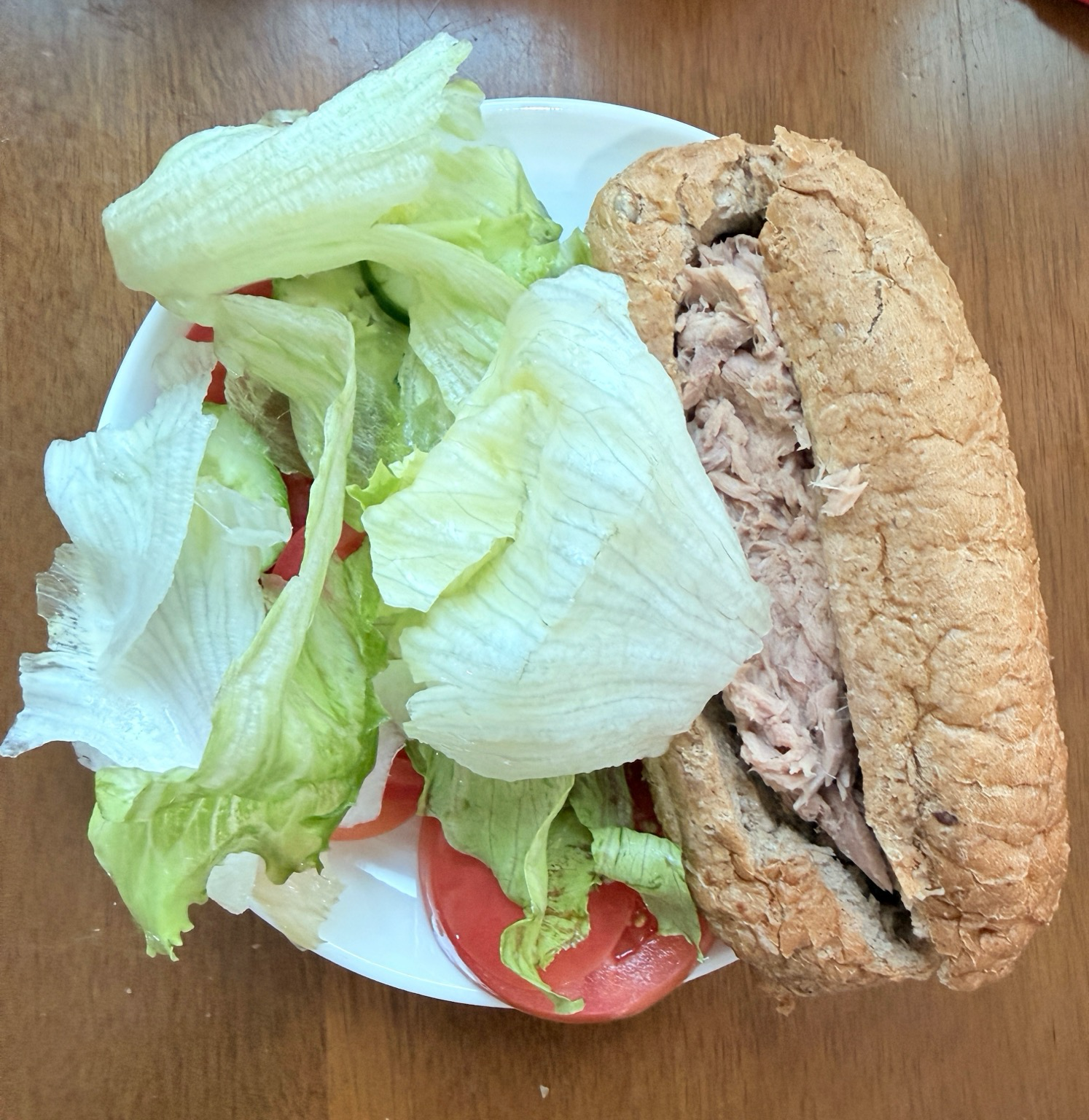

Pulled pork egg slice salad, since sandwich bread is on the way home :)

Harry (Hyeonggon) Yoo

hyeyoo

Edited 8 months ago

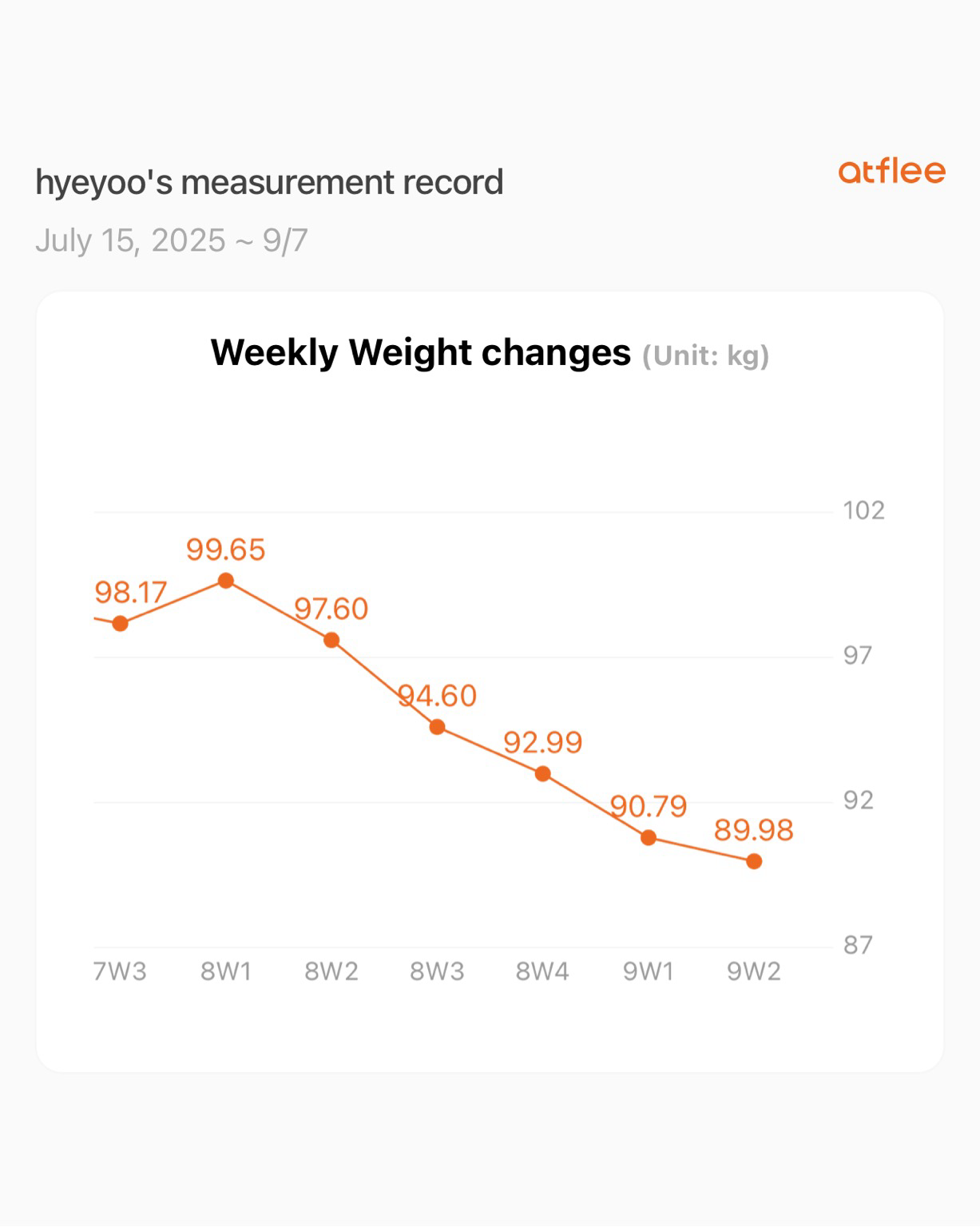

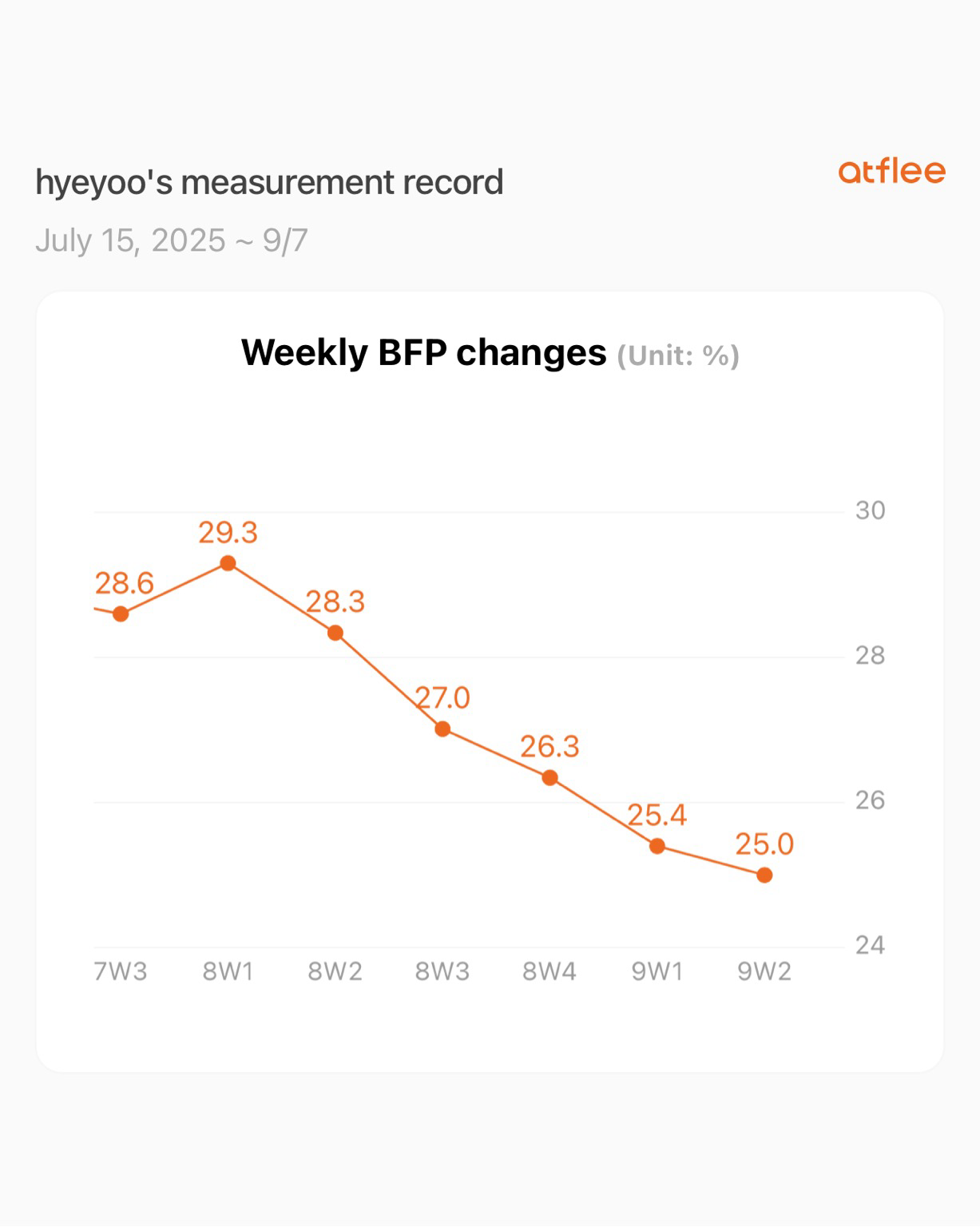

Here’s a progress update on my diet! It’s been five weeks now.

- Five workout sessions per week (focusing on losing fat)

- Eating only 2 sandwiches per day (most of the time!)

Target weight is about 70-75kg... so still a long long way to go.

Harry (Hyeonggon) Yoo

hyeyoo

Edited 8 months ago

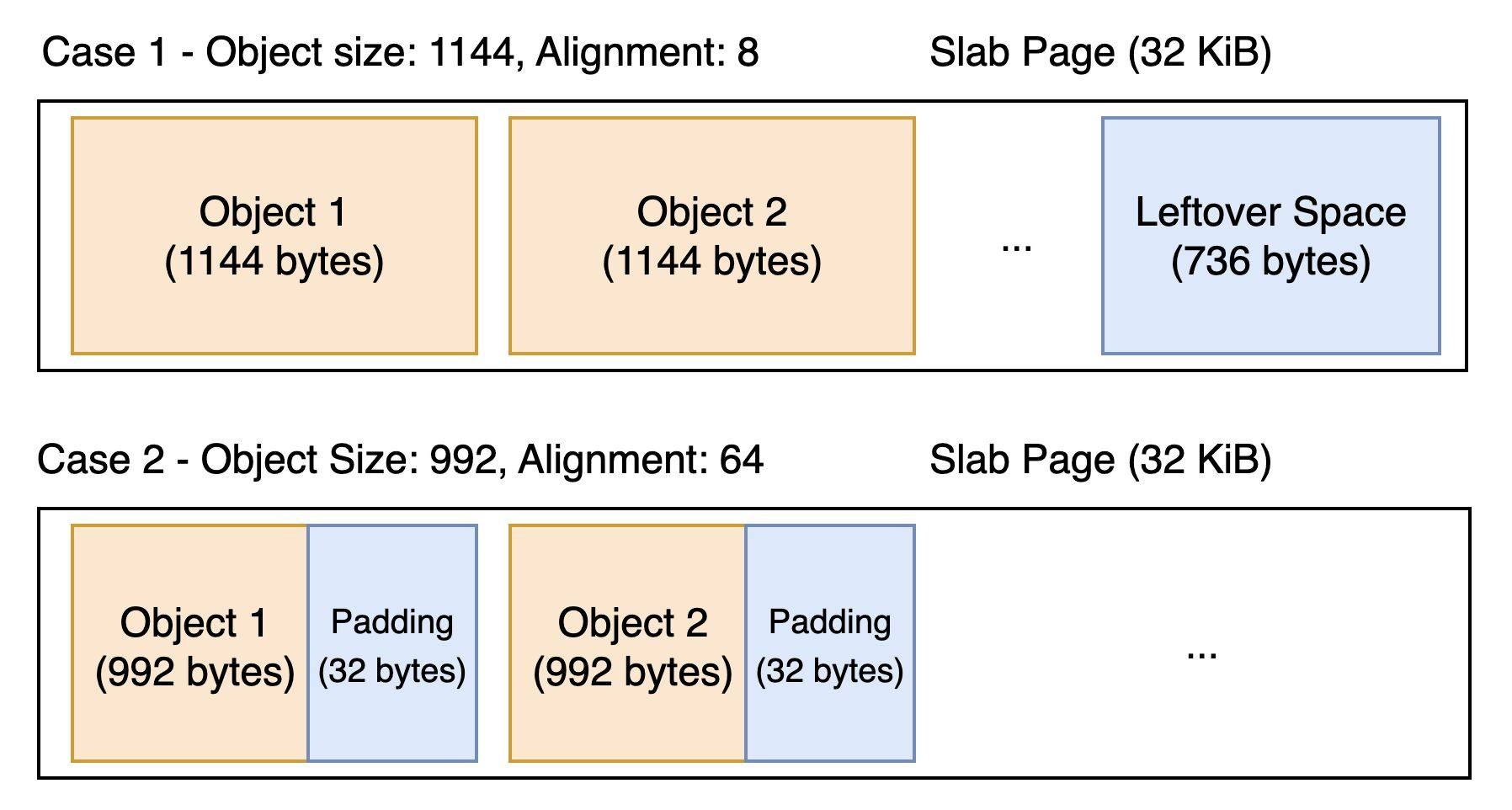

Working on saving 8 or 16 bytes per slab object in slab caches that fall into certain special cases. The most notable result is a memory saving of 0.7%-0.8% or 1.5%-1.6% (depending on the configuration) for the inode caches of ext4 and xfs.

When memory cgroup and memory allocation profiling are enabled (the former being very common in production and the latter less so), the kernel allocates two pointers per object: one for the memory cgroup to which it belongs, and another for the code location that requested the allocation.

In two special cases, this overhead can be eliminated by allocating slabobj_ext metadata from unused space within a slab page:

- Case 1. The "leftover" space after the last slab object is larger than the size of an array of slabobj_ext.

- Case 2. The per-object alignment padding is larger than sizeof(struct slabobj_ext).
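The two cases above can be sketched as simple size checks. This is only an illustration under stated assumptions, not the code from the patch series: `struct slabobj_ext_sketch`, `struct slab_layout`, and the helper names are hypothetical stand-ins, and treating "larger than" as "at least as large" is a choice made for this sketch.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/*
 * Hypothetical stand-in for the kernel's struct slabobj_ext, assuming both
 * memory cgroup and memory allocation profiling are enabled: two pointers.
 */
struct slabobj_ext_sketch {
    void *objcg;   /* memory cgroup the object belongs to */
    void *tag_ref; /* code location that requested the allocation */
};

/* Hypothetical description of how objects are laid out in one slab. */
struct slab_layout {
    size_t slab_bytes;  /* total bytes in the slab */
    size_t object_size; /* bytes the object itself uses */
    size_t stride;      /* object_size rounded up for alignment */
};

static size_t objects_per_slab(const struct slab_layout *l)
{
    assert(l->stride > 0 && l->stride >= l->object_size);
    return l->slab_bytes / l->stride;
}

/* Case 1: the space after the last object can hold the whole array. */
static bool ext_fits_in_leftover(const struct slab_layout *l)
{
    size_t n = objects_per_slab(l);
    size_t leftover = l->slab_bytes - n * l->stride;

    return leftover >= n * sizeof(struct slabobj_ext_sketch);
}

/* Case 2: each object's alignment padding can hold one entry. */
static bool ext_fits_in_padding(const struct slab_layout *l)
{
    return l->stride - l->object_size >= sizeof(struct slabobj_ext_sketch);
}
```

For example, a hypothetical 4096-byte slab of 1000-byte objects with no padding holds 4 objects and leaves 96 bytes, enough for the array (Case 1). The same objects padded to a 1024-byte stride leave nothing at the end but have 24 bytes of padding each (Case 2).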

Thanks to @vbabka, who suggested an excellent general approach to cover Cases 1 and 2 with minimal performance impact on the memory cgroup charging code (more details in the cover letter).

For these two cases, one or two pointers can be saved per slab object. Examples include the ext4 inode cache (case 1) and the xfs inode cache (case 2). That amounts to savings of approximately 0.7-0.8% (memcg only) or 1.5-1.6% (memcg + memory allocation profiling) of the total inode cache size.
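As a rough sanity check on those percentages, the saving per object is just the pointer bytes divided by the object size. The sketch below assumes 8-byte pointers and uses a hypothetical 1024-byte object. That size is an illustrative assumption, not the measured size of any inode cache object, and `savings_percent` is a made-up helper name.

```c
#include <assert.h>
#include <stddef.h>

/*
 * Percentage of an object's size saved by no longer allocating
 * saved_bytes of per-object metadata separately.
 */
static double savings_percent(size_t saved_bytes, size_t object_size)
{
    assert(object_size > 0);
    return 100.0 * (double)saved_bytes / (double)object_size;
}
```

With the hypothetical 1024-byte object, `savings_percent(8, 1024)` comes to about 0.78 (one pointer) and `savings_percent(16, 1024)` to about 1.56 (two pointers), which is in line with the ranges quoted above.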

https://lore.kernel.org/linux-mm/20250827113726.707801-1-harry.yoo@oracle.com/

Harry (Hyeonggon) Yoo

hyeyoo

Edited 1 year ago

Blogging again after a long time.

/me again realizes that things not documented are quickly reclaimed from memory.

Harry (Hyeonggon) Yoo

hyeyoo

Edited 1 year ago

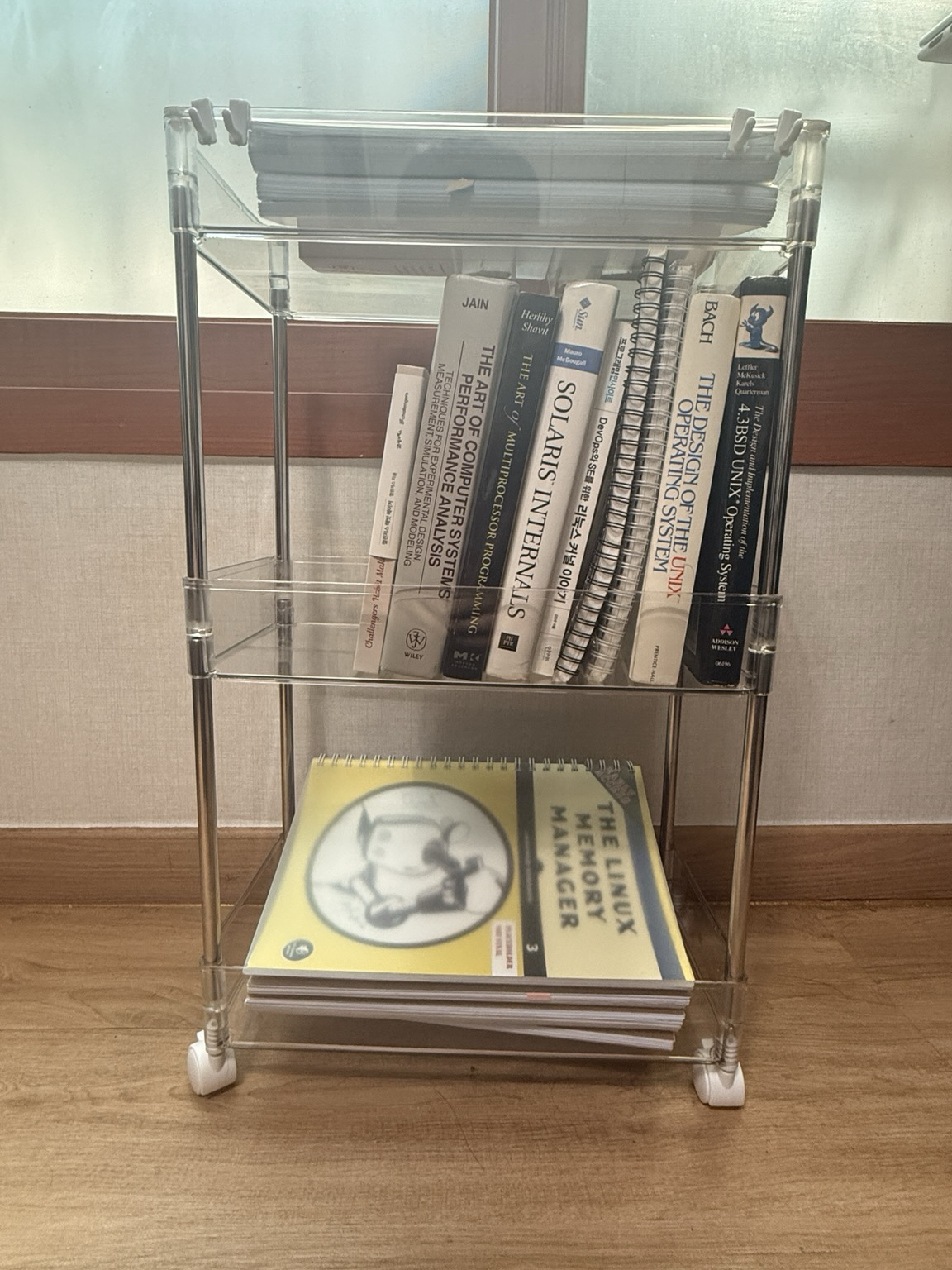

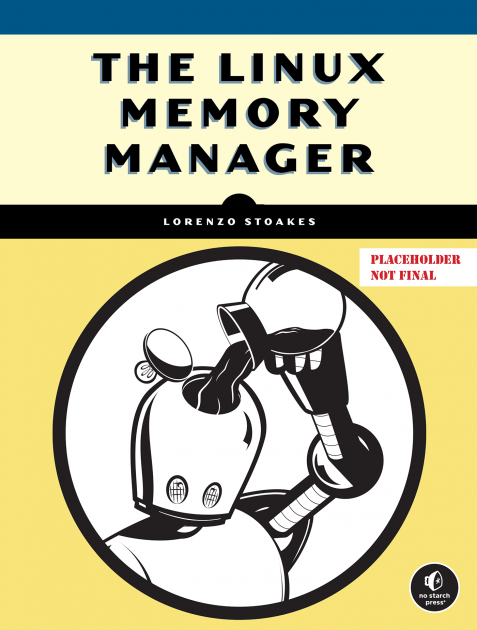

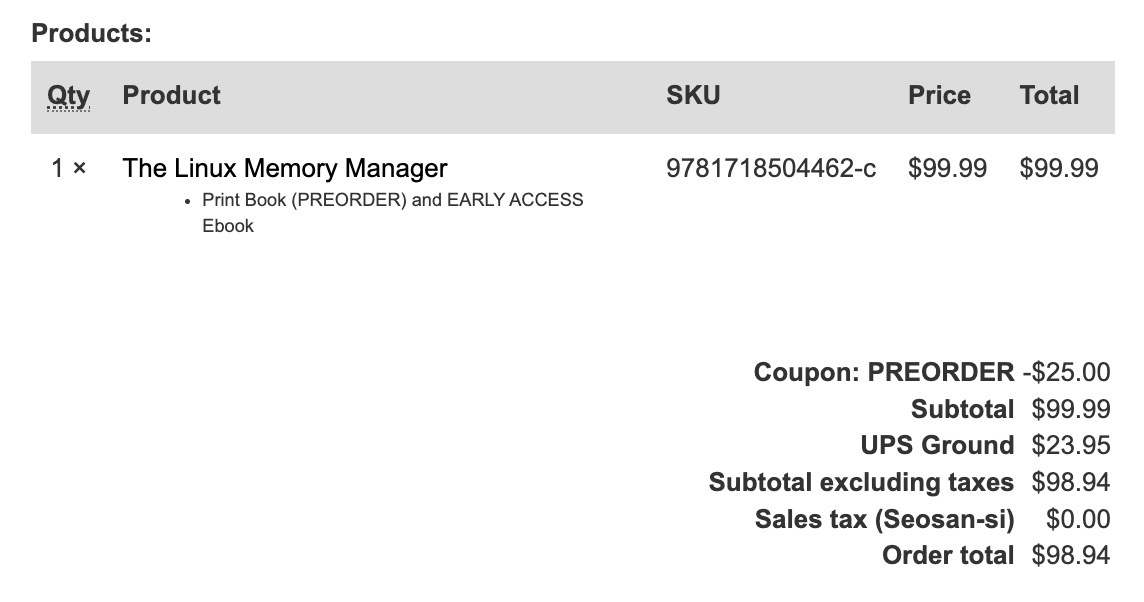

This book serves as a guiding light in navigating the increasingly complex memory management subsystem. A must-have book if you're interested in memory management!

I'm glad to see the author's long effort finally paying off. Finally available for preorder 👏.

Already ordered one!

https://fosstodon.org/@ljs/114004492112728241
